In Part 2, we began our review of Match algorithms. We covered the original match algorithm; how it encouraged students to submit dishonest rank lists; and the student revolt that led to a better matching process. It was a good time, and if you haven’t read it yet, you’re gonna want to get caught up before we move on.

We left off in 1975, right after mathematician David Gale sent a letter asking whether the NRMP’s matching algorithm was the optimal solution that he and Lloyd Shapley had proposed to the so-called Stable Marriage Problem.

Proposals

See, Gale and Shapley had shown that there wasn’t just one solution to the Stable Marriage problem. There were actually two. The solutions were mirror images, with the outcome dependent upon who proposed to whom.

If you let the men propose to the women, then the outcome is a stable match in which the men get their best possible outcome. In this scenario, the women get their least preferred stable match.

However, if you let the women propose to the men, then the outcome is reversed. You get a stable matching where the women get their best possible outcome (and the men their worst stable match).

You can see the impact of proposals on the Match in the following simple example.

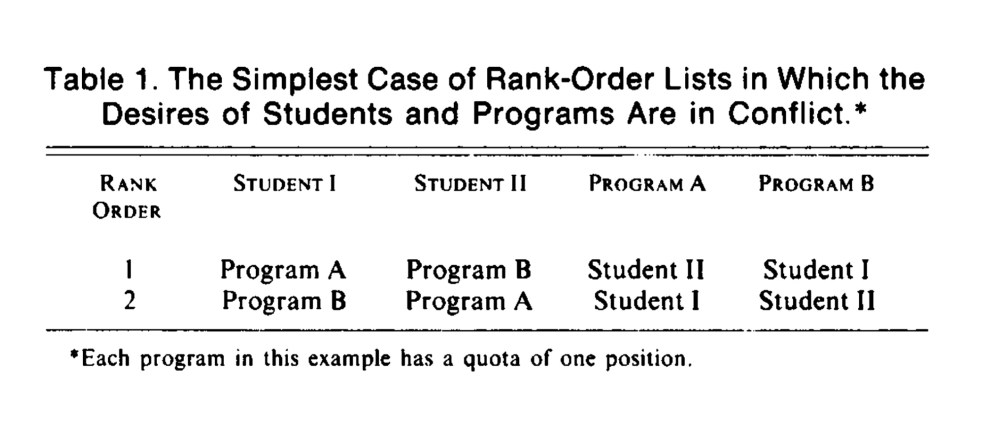

Imagine a world with just two hospitals and two applicants. Student I wants to match at Program A; Student II wants to match at Program B. Unfortunately, the hospitals have the opposite preferences.

The simplest possible case in which who proposes to whom matters. From Williams KJ, et al. N Engl J Med 1981; 304: 1165-1166. PubMed

If we allow programs to propose to students, the match goes like this:

- Program A proposes to Student II. Student II has Program A ranked on her list, so they match.

- Program B proposes to Student I. Student I has Program B on his list, so they match.

On the other hand, if we let the students propose matches to the programs, then the Match gives the opposite result:

- Student I proposes to Program A. Program A has Student I on their rank list, so they match.

- Student II proposes to Program B. Program B has Student II on their list, so they match.

Notice that, in either case, the outcome is stable. We can’t pair up the applicants in another way in which both parties prefer the outcome to the outcome they received. However, whichever party is allowed to propose is guaranteed of receiving their best possible outcome.

So who received their optimal outcome in the NRMP’s match – students or programs?

In 1975, the NRMP’s mathematical consultant, Elliott Peranson, responded to Gale’s letter.

In the third paragraph, the NRMP frankly acknowledged that its algorithm produces “college-optimal” (i.e., hospital-optimal) matches.

The letter above was not made public until 2007. In fact, until the mid-1990s, the NRMP refused to even acknowledge that its algorithm favored hospitals. And if it were not for the work our second unsung hero, the NRMP would almost certainly still be using this algorithm today.

Unsung Hero of the Match, #2

Today, Dr. Kevin Jon Williams is a full professor of medicine and noted atherosclerosis researcher. But in the late 1970s, he was just a Johns Hopkins medical student who wanted to couples match with his wife.

The NRMP’s messaging claimed that their algorithm ensured the best possible outcomes for students, and recommended that students rank programs honestly. But Williams wanted to be sure. So he sat down and pored over the bulletin of information the NRMP provided to students.

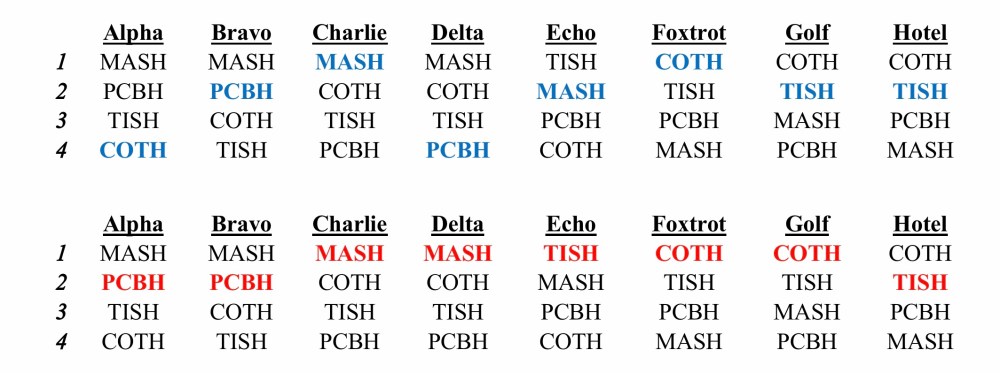

The 1980 NRMP Directory contained a hypothetical matching example of 8 students and 4 hospitals. The example was kind of cumbersome, with hospitals named “MASH,” “COTH,” “TISH,” and “PCBH,” and applicants named using the NATO phonetic alphabet. Most students probably didn’t have the patience to work through it – but when Williams did, he had a startling realization.

The Match algorithm was hospital-optimal. In fact, if you used a student-proposing matching process with the NRMP’s own matching example, then many of the students would have ended up with a better match.

Student outcomes using a hospital-proposing algorithm (blue) versus a student-proposing algorithm (red), using the hypothetical example from 1979 NIRMP Directory. (For readers interested in a deeper dive, I have the fake hospitals’ rank order lists and a little more discussion here.)

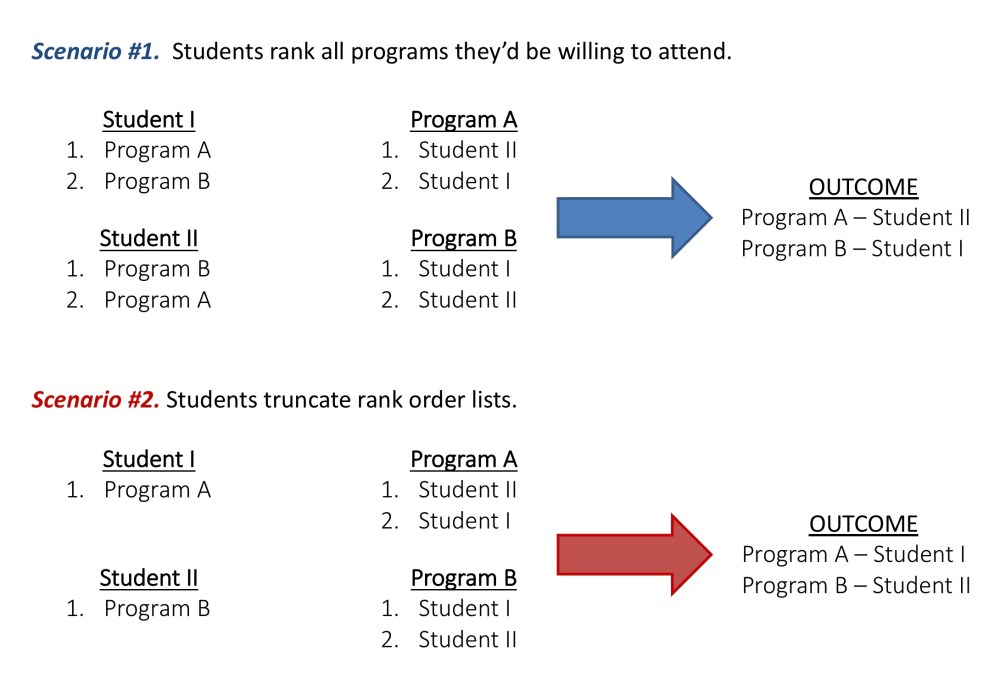

But there was another problem. Using a hospital-proposing system gave students an incentive to shorten their rank list to get a better match. To understand why, let’s look again at the two-hospital, two-applicant example from above.

When matching is done with a hospital-proposing algorithm, it’s possible for students to improve their matching outcome by truncating their rank order list.

The example above is simplistic – but the principle holds for more realistic match scenarios. By shortening their rank order lists, applicants can create a cascade of declined proposals that result in hospitals going farther down their rank order list – and potentially giving applicants access to more favored programs.

Thing is, this is a very risky strategy. Suppose only Student I truncated her rank list, but Student II ranked both programs. In that scenario, Program A will match Student II… and Student I will go unmatched.

Was this really a match that benefitted students?

A warning shot

In 1981, Williams aired his concerns in an editorial that appeared in the New England Journal of Medicine.

Williams and his student co-authors concluded that

The choice of … algorithms should be decided publicly between students and programs. However, all parties are entitled to be informed of the bias of the present algorithm toward programs and the availability of workable … alternatives.

It was the kind of piece that should have caused the NRMP to scurry to action. At the very least, they could have studied a student-optimal algorithm using real rank order lists and published the results, so that stakeholders know how often the results would change.

Instead, the NRMP did almost nothing.

(I say “almost” because the NRMP did do one thing in response to the NEJM piece: they removed the matching example and the detailed description of their algorithm from the materials distributed to students, thus making it nearly impossible for subsequent generations of students to discern that the NRMP’s algorithm systematically favored hospitals.)

By the time it became clear that the NRMP had absolutely no intention of doing anything in response to the NEJM paper, Williams was years past graduation and launching his academic career. He had nothing to gain from attacking a well-connected bureaucracy. Most of us, I think, would have let this issue die. But Williams wasn’t like most of us.

Skirmishes

For the next decade, Williams tried to get another journal interested in publishing a another piece on how the NRMP’s algorithm systematically favored hospitals over students. He failed – repeatedly.

Every time he would submit the manuscript, the journal editors would invite reviewers with ties to the NRMP – such as noted economist and NRMP consultant Alvin Roth – and the manuscript would be rejected.

See, the NRMP claimed that Williams concerns only applied to a “simple” model. But even though it was hospital-proposing, the NRMP’s algorithm included some little quirks, like allowing couples to match together, or allowing applicants to simultaneously match in two positions (i.e., preliminary and categorical PGY-2 positions). So the NRMP denied that the Match truly produced hospital-optimal results.

Technically, that’s true – but it sidestepped the big picture issues that Williams raised. How often were students disadvantaged by the NRMP’s algorithm? Was it honest for the NRMP to advertise their algorithm as providing the best-possible outcomes for students? And was it appropriate for the the NRMP to advise students to rank all programs, when submitting a shorter list could lead to better outcomes?

All of this discourse took place behind the veil of peer review. The NRMP chose not to engage in a public debate or conduct a formal study (even though they were the only ones in a position to do so). So Willams kept picking at it, year after year.

The final showdown

Finally, Williams struck a deal with the editor at Academic Medicine. The editor agreed to send the paper to an independent mathematician for review. If the mathematician said that Williams’ concerns were valid, then the journal would print the paper and give the NRMP the opportunity to write a counterpoint.

And so it came to pass that Williams aired his grievances in Academic Medicine in 1995.

Casting doubts on the NRMP and its algorithm, which are key to a process that affects thousands of students and residency programs, is not something that should be done lightly or unconstructively. Nevertheless, I believe it is necessary to raise questions about the algorithm and the official descriptions thereof because of the major difficulties with the algorithm – that it is biased in favor of hospitals over students and that it contains incentives for students to misrepresent preferences – have been known by the NRMP since at least 1976 and 1984, respectively. Yet the NRMP has not responded to the serious issues that these difficulties raise. (The issue of the bias of the algorithm was pointed out publicly long ago by my colleagues and me.) Even granted that large organizations that affect the careers of so many must move cautiously to make changes, so much time has passed that it is clear the NRMP is choosing not to act.

Williams KJ. Acad Med 1995; 70(6): 470-476. PubMed

The article was an academic bombshell. Just the correspondence it generated is among some of the liveliest that I’ve seen in the academic literature (such as the letters here, here, and here).

In the uproar that followed, the San Francisco Match – used by ophthalmology applicants – moved quickly to study how often applicants might be disadvantaged by their own program-optimal algorithm. It was the same simple study that the NRMP could have conducted at any point over the past 20 years, but chose not to.

The results were encouraging. Because the preferences of applicants and hospitals were often concordant, it appeared that situations in which students were disadvantaged by a hospital-proposing match occurred infrequently. Nonetheless, the San Francisco Match rapidly changed their algorithm to the applicant-optimal version.

The NRMP, in contrast, continued to drag their feet.

But unlike in 1981, this time, they couldn’t ignore the problem. Williams’ article garnered significant attention and generated a major public outcry. Both the AMA and the American Medical Student Association (AMSA) got involved, taking out advertisements, mobilizing students and physicians, and demanding change.

An advertisement from the October 1995 AMSA New Physician.

And so, in 1997, the NRMP relented. The algorithm was changed to the student-proposing version of the Gale-Shapley algorithm (with a few modern tweaks to allow linking preliminary and categorical positions and allowing couples to match), and rebranded the Roth-Peranson algorithm. And this, of course, is the algorithm that remains in use today.

The NRMP algorithm is now applicant-proposing. You can see how it works in this video.

Postscripts

- In 1997, the NRMP finally published the results of a study that demonstrated that Match outcomes were almost always the same whether a program-proposing or an applicant-proposing method was used. For physicians who matched before 1997 and wondered if their career would have been different if a student-optimal algorithm had been in place, that was great news. (Of course, the fact that the NRMP took 20 years to do the study was a little less great. And the fact that during most of this time they denied that there could possibly be an issue – and worked to suppress those whose rigorous analysis suggested that there might be – wasn’t great at all.)

- In 2012, the Nobel Prize for Economics was awarded for the work that underlies the NRMP Match. The prize was shared by Lloyd Shapley and economist Alvin Roth (who adapted the Gale-Shapley model to allow couples to match).

- Because the Nobel cannot be awarded posthumously, David Gale – who passed away in 2008 – did not share the Nobel Prize. (The Harvard medical students who led the 1951 uprising against the match – and who seemingly independently arrived upon the solution to the Stable Marriage problem a decade before Gale and Shapley – were also unrecognized.)

- In 2003, Roth wrote an article in JAMA celebrating the history of the Match and its algorithm. None of Williams’ articles were cited, and the shift from a program-optimal to an applicant-optimal algorithm was carefully glossed over. However, if you want to hear Dr. Williams tell his story about what it took to change the algorithm, here’s his talk provocatively titled, “Mathematical clarity versus an entrenched, out-of-touch bureaucracy: how we reformed a small, well-run match – and then the largest but badly run match.”

So in most ways, this is a story with a happy ending. Thanks to the efforts of Hendren and Williams, the NRMP algorithm is now student-optimal. Students can rank programs in their true order of preference without being penalized, and have no incentive to try to truncate their rank order list or otherwise game the system.

Left unaddressed here is why the NRMP was so slow to respond to Williams’ concerns – and what that tells us about the NRMP’s business model and the future of the Match. (But we’ll cover that in Part 4.)

You may also like:

The Match, Part 1: Why do we have a Match?

The Match, Part 2: Getting under the hood – how does the match work?