To fix Application Fever – the steadily-rising number of residency applications submitted by medical students – some say we don’t need caps. Instead, they claim that students just need more information. Give them better data about how many applications to submit, and they’ll stop overapplying. Or so the logic goes.

I’m on record as supporting application caps. But whether you do or you don’t, we should be able to agree on this: for informational strategies to work, they have to actually be informative.

The AAMC’s Apply Smart campaign is not.

I highlighted several problems with Apply Smart in Part I. But even if the AAMC dropped the fearmongering and addressed the bias present in their analyses, their figures still wouldn’t be that helpful.

Why?

They’re asking the wrong question.

Here, in Part II, I’ll highlight the most fundamental problem with the Apply Smart analyses, and suggest what we can do to give students the information they really need.

A thought experiment

Suppose that two equally-talented high schoolers apply to college.

One applies to 20 schools. One applies to 2.

Who has the better chance of getting in?

The answer is, it depends – right?

Which student has the greatest risk of “going unmatched” with her college applications?

The girl in the orange sweater applied to 10 times as many schools as her classmate, but all of them are highly selective. Unless she is a very strong applicant, she might be rejected or wait-listed at all of them. The girl in yellow applied to only two schools – but one is highly likely to accept her.

It should be obvious that the likelihood of admissions success is not primarily determined by the number of schools to which an applicant applies. The nature of those schools matters more.

This is the fundamental problem with Apply Smart. Simply analyzing the number of applications submitted won’t yield much useful information, even if the analyses are done well.

Better data, better decisions

There’s a reason that we don’t see capable high schoolers going “unmatched” in their college applications. In part, it’s because there are lots of colleges. But that’s not the only thing.

College applicants also have access to better data. When high schoolers try to figure out where to apply – and where they might get in – they can turn to their guidance counselor, online admissions calculators, or data-rich publications like The Princeton Review, among others. With a little research, it’s easy to get an idea about where your application will be competitive – and where you don’t have a chance.

In contrast, the average medical student must draw from such resources as:

- The biased Apply Smart graphics

- N=1 testimonials from classmates

- Vague statistics from the few program websites that provide them

- Speculation and anecdote on Reddit or Student Doctor Network

- Faculty advisors whose stale knowledge is based on their own experience 15-20 years ago

Why don’t medical students have access to better information?

Wouldn’t it be nice if applicants had access to standardized statistics on how many candidates like themselves ended up matching at a particular program? Then, they could make more informed decisions about where to apply.

Unfortunately, many residency programs provide next-to-zero useful information to applicants to help them gauge their competitiveness. Applicants have asked for more information for years – but programs have been reluctant to provide it.

Why?

Appearances – Some program directors (PDs) are hesitant to publicize their statistics for fear that it will hurt recruiting. They worry that “top candidates” who see a low mean USMLE score will feel overqualified and decline to apply – which would jeopardize their ability to recruit the residents they want.

Unintended consequences – If programs were required to release standardized information on their entering class, others (like U.S. News & World Report or Doximity) would certainly factor it into their residency rankings. If that happened, you could expect residency programs to focus even more on measurable markers of prestige (USMLE scores, school prestige, AOA, etc.).

Confidentiality – It’s okay for Yale to report SAT and ACT data for their 1,578 undergraduate freshmen. And it’s probably okay for Yale’s internal medicine residency program to list the mean USMLE Step 1 score for the 36 categorical residents who enter each year. But what about their 5 incoming dermatology residents? The 3 plastic surgery PGY-1’s? The 2 neurosurgery interns? At a certain point, these statistics can’t be released without compromising confidentiality.

Meaning – Suppose a program lists their mean Step 1 score as 240. What does that really tell you? It could mean their residents have scores ranging from 205 to 265. Or it could mean they screen out everyone below 235. Or maybe every single resident who had a Step 1 score below the mean had incredible research experience or a favorable away rotation. The decisions that lead to a candidate’s ultimate ranking are often so individualized that even if summary statistics were provided, they probably wouldn’t be that informative to students.

How do we give students the information they really need?

Instead of focusing on the relationship between number of applications submitted and residency entry (which doesn’t factor in the competitiveness of the programs) or publishing statistics on the entering class (which is fraught with the issues listed above), we should provide a different type of information to students. We should focus upon the probability that a given application will result in an interview offer.

Unlike the relationship between number of applications submitted and residency entry, the relationship between the number of interviews conducted and the likelihood of matching is highly predictable.

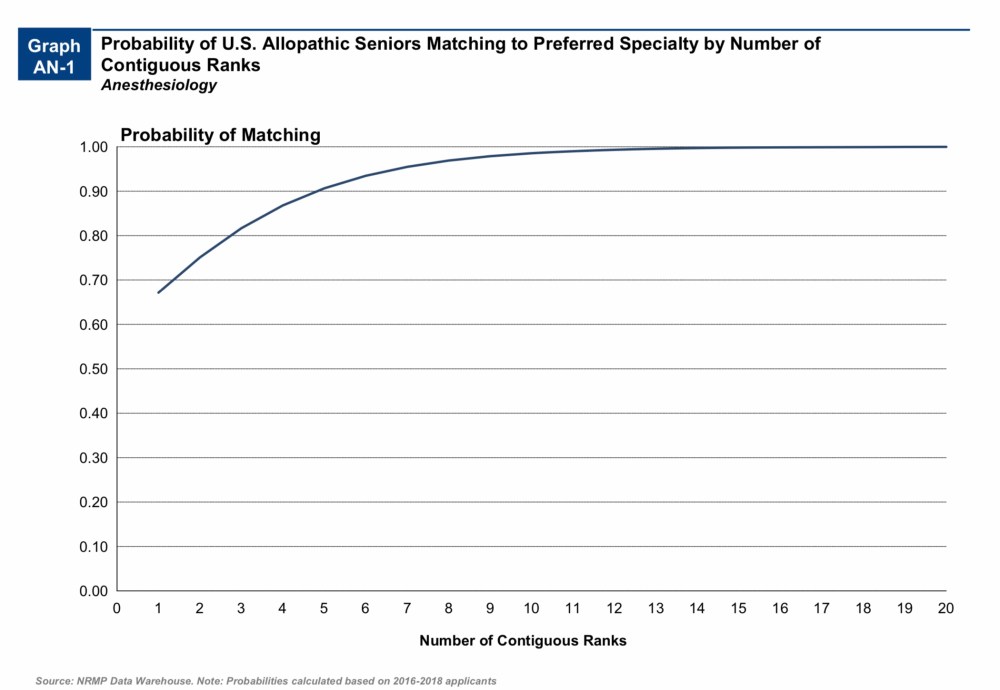

In their “Charting Outcomes in the Match” reports, the National Resident Matching Program (NRMP) publishes data on the probability of matching based on the number of ranks an applicant submits.

(n.b. – Because students may interview at programs they choose not to rank, the number of ranks is not a perfect surrogate for the number of interviews completed. But it’s pretty close.)

Take, for instance, U.S. allopathic students entering anesthesiology.

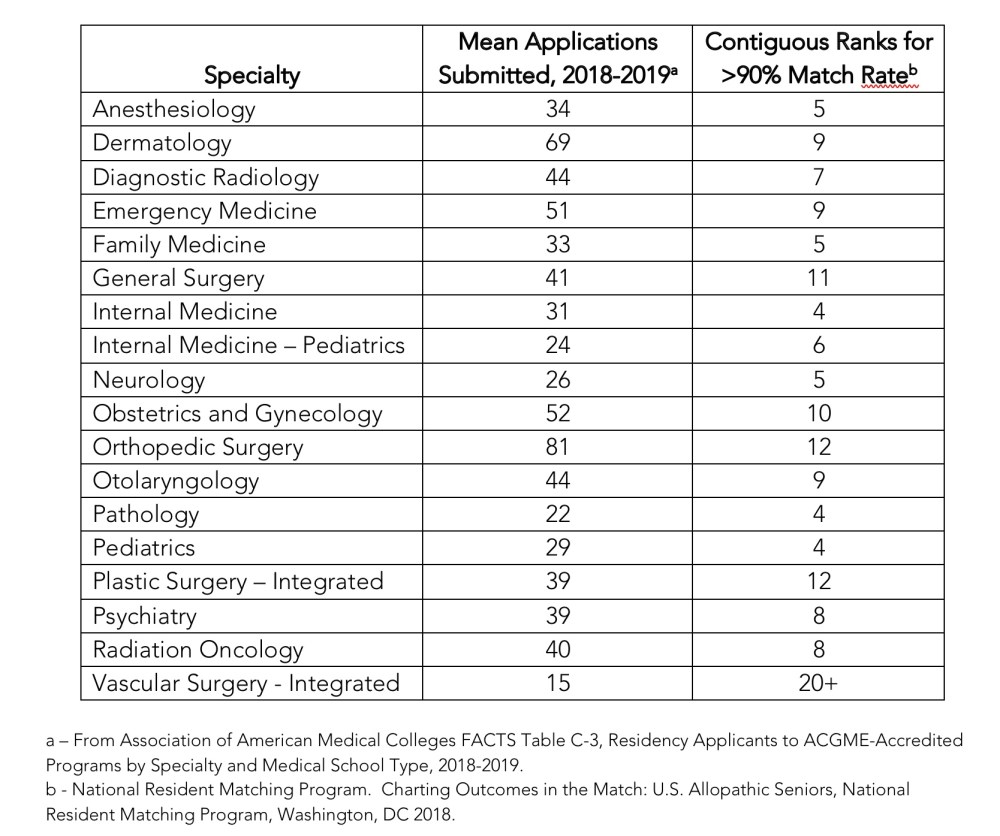

The average applicant in anesthesiology applies to 34 programs. But candidates who rank 5 or more programs have a greater than 90% chance of matching successfully.

But maybe you’re not interested in anesthesiology. Maybe you’re trying for a competitive specialty like dermatology, where the average candidate submits 69 applications. But if you interview at and rank 9 programs, you too will enjoy a 90%+ chance of Match success.

The same pattern holds for almost every specialty for which the NRMP has data, as you can see from the table below. If you rank 4-12 programs, your chance of matching is better than 90%. (The only exception is vascular surgery, where the asymptote never goes above 90%. I think this anomaly reflects “competition” with general surgery programs for candidates who don’t match at their first choice integrated vascular surgery program.)

Note that the figures in the table above are for U.S. allopathic seniors. However, the NRMP also provides data for both U.S. osteopathic seniors (DOs) and international medical graduates (IMGs).

The data reports for DOs and IMGs are somewhat more limited than those for U.S. MDs, because fewer applicants from those backgrounds match in some of the more competitive specialties. However, for most disciplines, we see the same pattern that we found above.

For instance, 52% of IMGs enter residency programs in internal medicine. There, candidates who rank 9-10 programs have a >90% Match rate.

American DOs have to rank a few more programs than their MD counterparts to achieve the same 90%+ threshold – but the numbers are comparable (i.e., 6 for anesthesiology, 10 for radiology, 14 for general surgery, etc.).

The point is this:

Applicants – even IMGs, or MDs applying to competitive specialties – do not need to apply to 100 programs. They need to apply to 10-12 programs where they’ll be offered an interview.

We just have to help them figure out which programs those are.

Can we do it?

I say yes.

It’s technically possible.

All we need to know are which students received interview offers from which programs. Then we can use information from their applications as predictors in multivariable logistic regression models that will spit out the probability that a similar candidate will be offered an interview.
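To make that concrete, here is a minimal sketch of the idea in plain Python. Everything in it is an assumption for illustration: the two predictors (a standardized Step 1 score and an away-rotation flag), the synthetic data, and the function names are all hypothetical, and a real model would be fit to actual interview-offer records with proper statistical software rather than this hand-rolled gradient descent.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def fit_logistic(X, y, lr=0.5, epochs=500):
    """Fit logistic regression weights (intercept first) by batch
    gradient descent on the average log-loss."""
    n, d = len(X), len(X[0])
    w = [0.0] * (d + 1)
    for _ in range(epochs):
        grad = [0.0] * (d + 1)
        for xi, yi in zip(X, y):
            p = sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi)))
            err = p - yi
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * gj / n for wj, gj in zip(w, grad)]
    return w

def predict_interview_probability(w, x):
    """Estimated probability that an applicant with features x is offered an interview."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))

# Synthetic, made-up applicants for ONE hypothetical program.
# Features: [standardized Step 1 score, did an away rotation (1.0 or 0.0)].
random.seed(0)

def make_applicant():
    step1_z = random.gauss(0, 1)
    rotated = 1.0 if random.random() < 0.2 else 0.0
    true_logit = -1.0 + 1.5 * step1_z + 2.0 * rotated  # invented "truth"
    offered = 1 if random.random() < sigmoid(true_logit) else 0
    return [step1_z, rotated], offered

data = [make_applicant() for _ in range(300)]
X = [features for features, _ in data]
y = [offered for _, offered in data]

w = fit_logistic(X, y)
strong = predict_interview_probability(w, [1.0, 1.0])   # above-average score, rotated
weak = predict_interview_probability(w, [-1.0, 0.0])    # below-average score, no rotation
print(f"strong applicant: {strong:.2f}, weak applicant: {weak:.2f}")
```

The output that matters to a student is the last line: a single probability per program, rather than a pile of percentiles to interpret.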

It wouldn’t require the participation of programs.

These models could be built without any input from the programs – we could just put in the data and let the statistical software select the variables and determine their weight in the model. However, if program directors were willing to participate in the model building, the probability estimates would be much more precise.

Programs have an incentive to help with model building.

There is absolutely no need for PDs to receive hundreds of applications from students who have no chance of being offered an interview. It wastes their time and prevents them from being able to focus on the type of applicant they’re trying to recruit.

If a program isn’t going to interview students who have failed Step 1, or non-U.S. citizens, or any other particular group, then they should make that clear – so that the presence of those factors results in a 0% probability estimate.
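One way to picture this: a program's stated screens simply override whatever the statistical model says. The sketch below is hypothetical throughout (the field names, the screen functions, and the 0.35 model estimate are invented for illustration), but it shows how a declared screen turns into a 0% estimate.

```python
def screened_probability(model_probability, applicant, screens):
    """Return 0.0 if any declared screen excludes the applicant;
    otherwise pass the model's estimate through unchanged."""
    for excludes in screens:
        if excludes(applicant):
            return 0.0
    return model_probability

# Hypothetical screens a program might declare up front.
screens = [
    lambda a: a["failed_step1"],      # does not interview after a Step 1 failure
    lambda a: not a["us_citizen"],    # does not interview non-U.S. citizens
]

applicant_a = {"failed_step1": False, "us_citizen": True}
applicant_b = {"failed_step1": True, "us_citizen": True}

print(screened_probability(0.35, applicant_a, screens))  # model estimate kept
print(screened_probability(0.35, applicant_b, screens))  # screened out
```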

Confidentiality/anonymity are better preserved.

Because not every candidate who is offered an interview is ranked (either because he or she declines the interview invitation, or attends the interview and is found unsuitable for the program), the number of candidates who receive interview offers is much higher than the number of matched residents. Thus, even for small specialties, there is a robust sample size to provide meaningful statistics without identifying specific residents. (For instance, in 2018, the average residency program that filled in the Match ranked 13.1 applicants for every position.)

There is a (potential) working prototype.

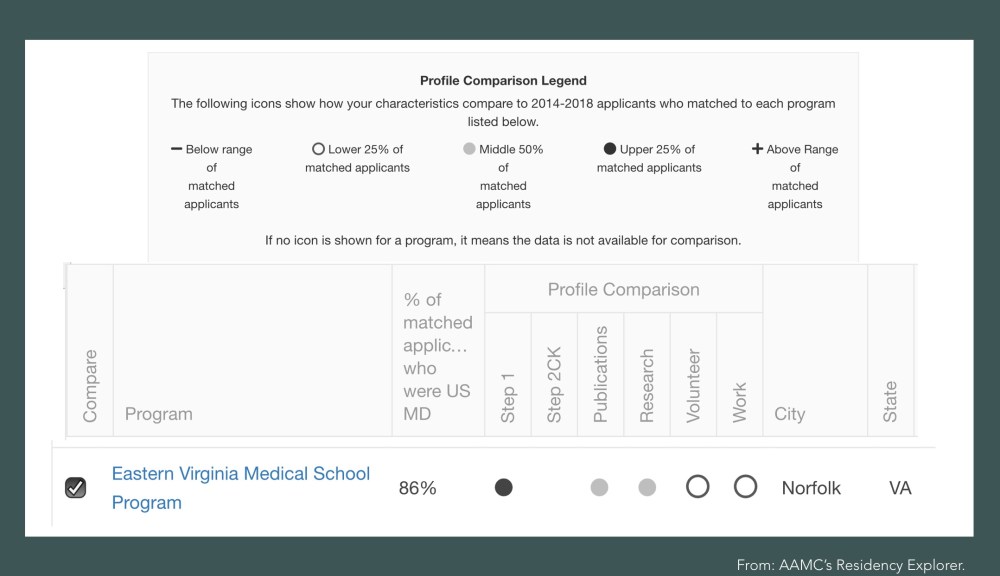

The AAMC recently unveiled a working prototype for a program called Residency Explorer. It allows students to input their application data (albeit only crudely) and see how their profile compares against the profile of residents who entered a given program.

Unfortunately, the information that Residency Explorer provides is hard to interpret. If your Step 1 score is in the top 25% for matched residents, but the number of volunteer and work experiences you report is in the bottom 25% – which matters more? Are you likely to get in or not?

Data from the AAMC’s Residency Explorer. Will this student be offered an interview?

Replacing these vague figures with an estimate of the probability of being offered an interview will make it much easier to curate a list of applications likely to result in the 4-12 interviews needed for a 90%+ match rate.
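Here is a rough sketch of how a student might use such estimates to size up an application list. It assumes, unrealistically, that interview offers from different programs are independent of each other, and the probabilities and list sizes are invented for illustration. Given a per-program interview probability, it computes the expected number of interviews and the chance of landing at least a target number.

```python
def interview_count_distribution(probs):
    """dist[k] = probability of exactly k interview offers,
    ASSUMING offers from different programs are independent."""
    dist = [1.0]
    for p in probs:
        nxt = [0.0] * (len(dist) + 1)
        for k, mass in enumerate(dist):
            nxt[k] += mass * (1.0 - p)   # this program says no
            nxt[k + 1] += mass * p       # this program offers an interview
        dist = nxt
    return dist

def prob_at_least(probs, target):
    """Probability of receiving at least `target` interview offers."""
    return sum(interview_count_distribution(probs)[target:])

def expected_interviews(probs):
    """Expected number of offers is just the sum of the probabilities."""
    return sum(probs)

# Two hypothetical application lists (all numbers are made up).
targeted = [0.6] * 20      # 20 programs where the applicant is competitive
scattershot = [0.1] * 60   # 60 long-shot programs

for name, probs in [("targeted", targeted), ("scattershot", scattershot)]:
    print(
        f"{name}: {len(probs)} applications, "
        f"expected interviews = {expected_interviews(probs):.1f}, "
        f"P(at least 10) = {prob_at_least(probs, 10):.2f}"
    )
```

In this made-up comparison, the shorter targeted list yields more expected interviews than a list three times as long, which is the whole argument for reporting probabilities instead of application counts.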

Will this fix Application Fever?

Maybe not. But it’s still the right thing to do.

After all, it’s possible that reporting these probabilities will encourage some students to apply to even more programs. Some students – especially borderline applicants, or those applying in highly competitive specialties – may realize that it’s impossible to find 10-12 programs likely to grant an interview, and apply to every single program in the specialty.

We still need to give students the information. Only then can they make an informed decision about whether it’s worth spending thousands of dollars to apply to 100+ programs when their chance for success is low.

Some will choose to apply. Others will choose to pursue a more achievable career pathway, or take a year to enhance their application and reapply.

Similarly, some students highly value the chance to be at a prestigious program, and if they see their probability of interviewing is low, they’ll pay to apply to every single “big name” program in the country to maximize their chances.

That’s okay with me, too.

What I’m not okay with is students being financially plundered and program directors getting buried so deep in applications that the only way to evaluate their future colleagues is with convenience metrics – all while we sit around and act as if there’s nothing we can do. It benefits no one (other than the AAMC’s accountants and executives) when students submit 10 times as many applications as they need to match, or submit applications to programs where their application won’t even be read.

We’re not stuck with the low-value information we use today. If we want to cool Application Fever, we can’t just ask students to Apply Smart – we have to give them the data they need to apply smarter.
