Surely every resident has had the experience of trying to explain to a patient or family what, exactly, a resident is. “Yes, I’m a real doctor… I just can’t do real doctor things by myself.”

In many ways, it’s a strange system we have. How come you get to call yourself a doctor after medical school, but you can’t practice independently as a physician until after residency? How – and why – did this system get started?

These are fundamental questions – and as we answer them, it will become apparent why some problems in the medical school-to-residency transition have been so difficult to fix.

In the beginning…

Go back to the 18th or 19th century, and medical training in the United States looked very different. Medical school graduates were not required to complete a residency – and in fact, most didn’t. The average doctor just picked up his diploma one day, and started his practice the next.

But that’s because the average doctor was a generalist. He made house calls and took care of patients in the community. In the parlance of the day, the average doctor was undistinguished. A physician who wanted to distinguish himself as being elite typically obtained some postdoctoral education abroad in Paris, Edinburgh, Vienna, Berlin, Hamburg, or Munich.

The Pennsylvania Hospital – founded in 1751 – was the first hospital in the United States to have resident physicians.

This kind of European training was essential for obtaining a prestigious position in the medical community. If all you wanted to do was prescribe elixirs, poultice wounds, and perform minor surgical procedures on the patient’s kitchen table, then sure, your standard undergraduate medical education was sufficient.

But if you wanted to obtain an important position at a metropolitan hospital or join a medical school’s faculty, getting training in Europe was the way to do it. (In fact, in the years before World War I, almost every single faculty member at the most prestigious American medical schools – Johns Hopkins, Harvard, Yale, the University of Michigan – had undertaken medical training in Europe, usually in Germany.)

In part, this was because hospitals in Europe were much more sophisticated than those in the U.S., and could offer exposure to the most state-of-the-art therapies and forward-thinking faculty.

But there was another, less noble motivation for restricting elite positions to physicians who had trained in Europe. Obtaining training abroad was a privilege that only a few young physicians could afford. Limiting opportunities to those who had obtained it was a convenient way to restrict the upper echelons of the medical profession to a certain social class.

So when – and why – did U.S.-based residency training programs become a requirement for practice? It turns out that the development of residency training closely parallels two other trends in medicine.

1) The rise of the hospital

Before the Civil War, hospitals in the U.S. were few and far between. There just weren’t many places where you could get residency training, even if you wanted it.

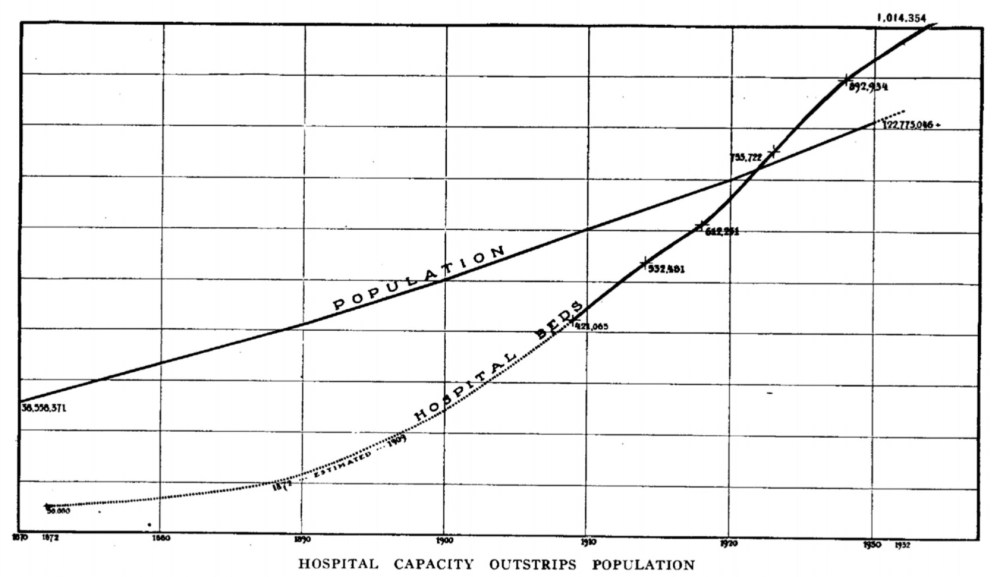

But between 1870 and 1914, new hospitals sprang up everywhere. Although rural areas still relied on physicians making house calls, most larger cities had several hospitals. Combined with the increasing urbanization of America, this meant that the majority of Americans now lived in close geographic proximity to a hospital.

Hospitals grew rapidly in the years after the Civil War. From JAMA 1933; 100(12):887-972.

Thing is, caring for patients in a hospital is complicated. They need attention at all hours of the day.

And in the telegraph era, it wasn’t practical to summon a doctor from the community every time a patient needed their bandages changed or a new order for tincture of opium. You had to have a doctor on the premises all the time.

This, of course, is the origin of the term resident. Resident physicians were so called because, yes, they lived in the hospital or on the grounds.

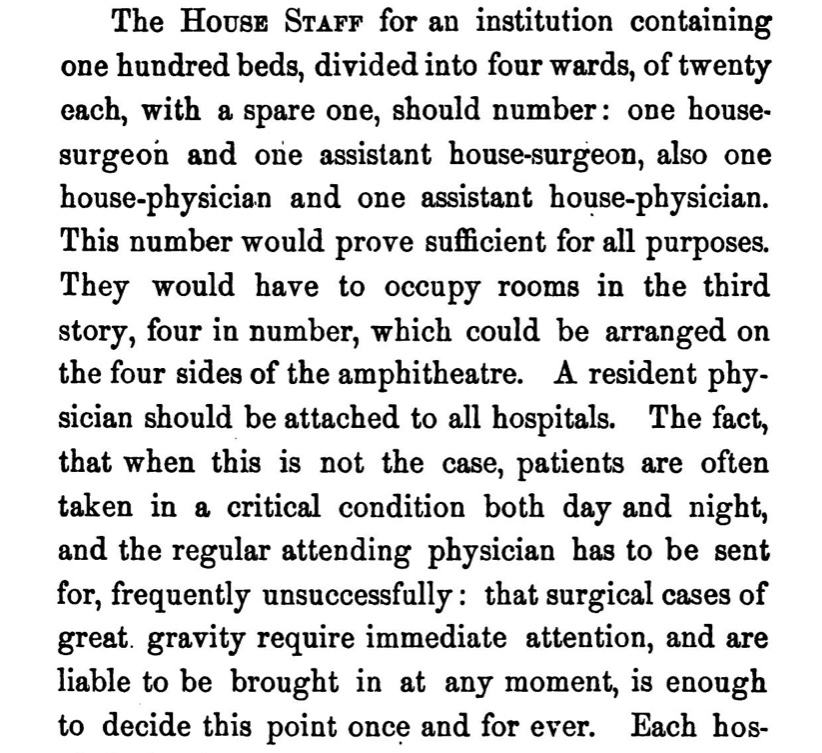

Valentine Mott Francis’ 1859 A Thesis on Hospital Hygiene, which served as a blueprint for the construction of new hospitals, recommended that a hospital of 100 beds be staffed with two medical and two surgical residents.

If you’re wondering why the house staff has to occupy rooms on the third story, it’s because “in case of fire, they, being in good health, could easily escape.”



In other words, it was the rise of hospitals – and their need for a workforce – that led to demand for resident physicians.

Still, by the early 1900s, only around half of graduating students pursued postgraduate medical training. (In part, that’s because entire hospitals could be staffed with just four resident physicians.)

More importantly, residency training was still largely viewed as being unnecessary. I mean, if you were a graduating medical student, would you voluntarily spend years living in the hospital, working grueling hours for little pay – or would you just hang out a sign and start practicing?

The 1910 Flexner report – the landmark examination of American medical education – says remarkably little about residency training. Flexner scarcely mentioned it at all, describing it once as an “undergraduate repair shop”, and seemed to view it as superfluous in the face of a quality four-year medical education.

So how, then, were graduating medical students convinced that residency training was necessary?

–

2) The rise of the specialist… and the specialty board

Medical student demand for residency training was driven by another trend: specialization in medicine.

In the old days, when you graduated from medical school, you were a Doctor. There was only one kind. By virtue of their educational attainment, all doctors were supposed to be able to do all the things that doctors were capable of doing.

If all physicians were to have the same scope of practice, then it was sufficient to give them all the same education in medical school. But as knowledge and technology advanced, more and more physicians began to limit their practice to certain areas.

Suppose you wanted to focus your practice on diseases affecting the eyes. You could do a residency at one of the handful of special eye and ear hospitals that existed in Boston, New York, or Chicago. And after you’d toiled away for some years – and learned the trade from the masters (who had in turn studied in Vienna) – then you could call yourself an ophthalmologist and open your own eye clinic.

But… if you wanted… you could become an ophthalmologist without doing all that.

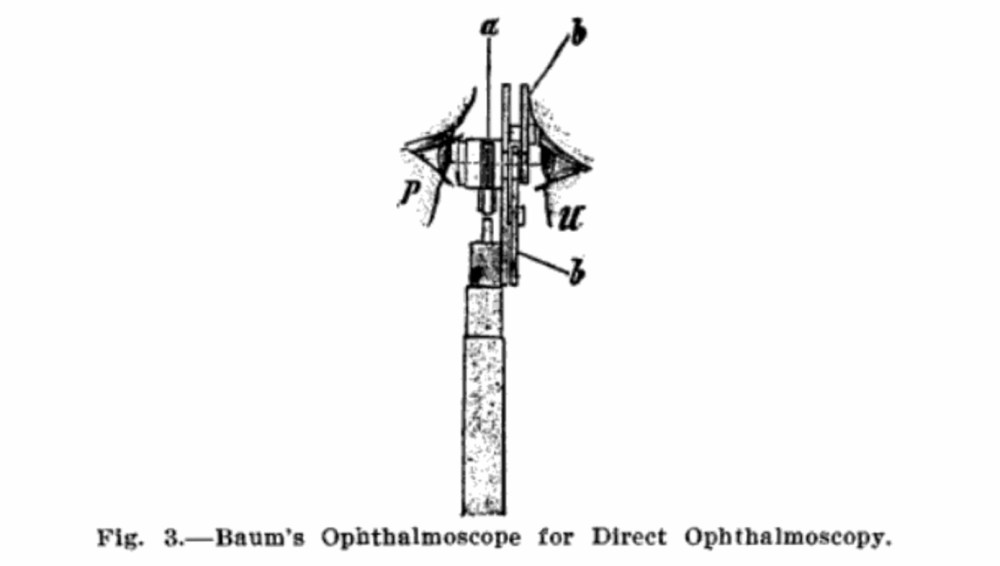

See, if it suited you better, you could just apprentice with an ophthalmologist until you felt good about your ophthalmology skills. Or you could take a short six-week course on eye diseases. But if even that was too much for you, have no fear – because in most areas, there was nothing keeping a general practitioner from billing himself as an eye specialist just because he picked up a battery-handle ophthalmoscope at the medicine show.

The invention of the battery-handle ophthalmoscope made it possible for any doctor to be an ophthalmologist. From The Ophthalmic Year Book, 1913.

For ophthalmologists who had spent years training in Europe, this was unacceptable.

[Ophthalmology] found itself infested with charlatans. The problem of inducing students to undertake graduate courses in ophthalmology had no obvious answer. Why should the average specialist, whose state license permitted him to practice any field he chose, devote two years to this needless pursuit?

-Cordes FC and Rucker CW. Am J Ophthalmol 1962; 53: 243-264.

Multiple potential remedies were considered.

Perhaps the most obvious solution would be for state medical boards to issue separate licenses for specialists. But this made board members squeamish. Asking the board to define – and police – the practice of ophthalmology would be cumbersome. Where, exactly, does the practice of general medicine end and ophthalmology begin?

And for their part, ophthalmologists were reluctant to surrender the gatekeeping for their specialty to a government agency. Weren’t the ophthalmologists themselves in the best position to decide who should become an ophthalmologist?

The solution they arrived at changed the course of graduate medical education.

Residency-trained ophthalmologists chose to establish the first specialty board – the American Board of Ophthalmology – and the first board exam. (Naturally, the need to regulate entry to the specialty was presented with arguments related to patient safety, not economic protectionism – though both were certainly in play.)

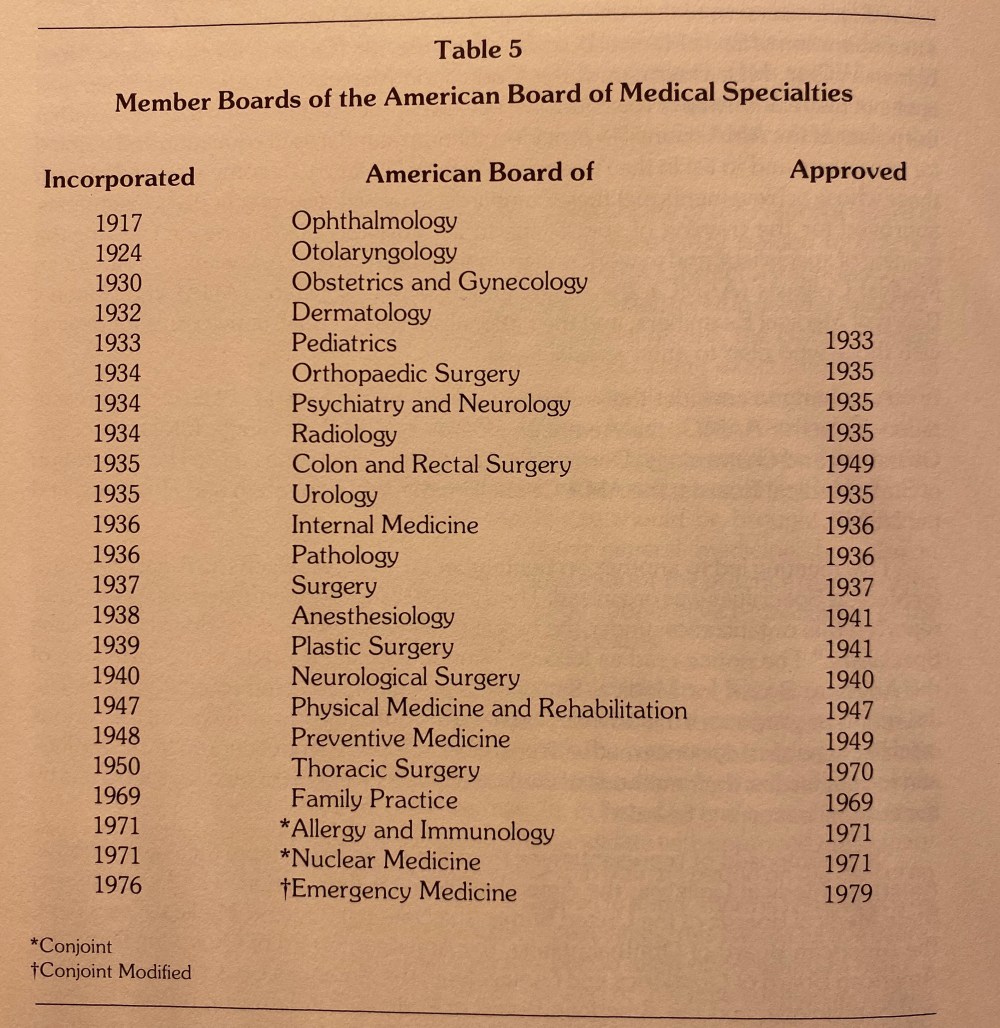

Other specialists soon followed suit. The American Board of Otolaryngology was formed in 1924, and the 1930s ushered in the Golden Age of Specialty Formation, with no fewer than a dozen specialties staking their claims to a piece of medical practice.

The 1930s were the Golden Age for forming medical specialty boards. Taken from The National Board of Medical Examiners: The First Seventy Years.

All of the specialty boards administered examinations to gatekeep the specialty. But they worried that an examination alone might not be sufficient to keep all the charlatans out. So the boards wouldn’t let just anyone take their test. To even have the privilege of sitting for the exam, a candidate had to complete a period of postgraduate residency training.

The solution was ingenious. By requiring residency training, the boards guaranteed that any would-be specialist had paid their dues before joining the club. But by also requiring passage of their own examination, the boards kept the ultimate gatekeeping power for themselves rather than ceding it to the hospitals.

The effects of this decision are still relevant today.

What’s past is prologue

Looking back at the origin and evolution of residency training, it’s clear why some problems in the UME to GME transition persist today.

Residency training did not arise as a natural outgrowth of undergraduate medical education. The forces that shaped GME were the hospitals and the specialty boards – entities completely separate from (and with different motivations than) the academic institutions that ran UME.

Along the way, there was never really a moment when everyone stopped to ask what residency education should be. Was it supposed to be an apprenticeship? On-the-job training? Or should GME be a broader educational experience built upon the UME foundation? Though there have been attempts along the way, we’ve mainly just made it up as we’ve gone along, with individual groups looking out for their own interests.

Maybe it’s time to ask those questions.

Effecting change won’t be easy. It will require buy-in from stakeholders whose interests are often divergent. But without concerted leadership to determine what medical education should be – not just UME, but GME and CME – I think we can look forward to more of what we’ve seen in the past.
