Recently, the presidents/CEOs of the National Board of Medical Examiners and the Federation of State Medical Boards wrote an invited commentary in Academic Medicine. The piece was in response to an article by medical students highlighting the adverse effects of the “Step 1 Climate” in medical education.

I have criticized several things about the NBME/FSMB response article. But each time I re-read it, it frustrates me anew.

The rhetorical structure and content of the NBME/FSMB response article read as a defense of a scored USMLE Step 1. While conceding that a pass/fail Step 1 might benefit some U.S. medical students, Drs. Katsufrakis and Chaudhry point out that any change to policy must also consider the perspective of other stakeholders (such as residency program directors, international medical graduates, and students from less prestigious U.S. medical schools).

Here, the presidents of the NBME/FSMB helpfully step in to speak on behalf of each of these stakeholder groups – articulating a perspective, naturally, that supports a scored USMLE. (Does a scored USMLE in fact provide a net benefit to IMGs, under-represented minorities, and graduates from less prestigious medical schools? I’m not confident that it does.)

To me, their argument verges on fear-mongering, suggesting that elimination of a scored Step 1 may lead to a dystopian future in which the tools used for residency selection will be even more expensive, more discriminatory, and might even harm patient safety (since medical students may spend more time on Netflix and Instagram).

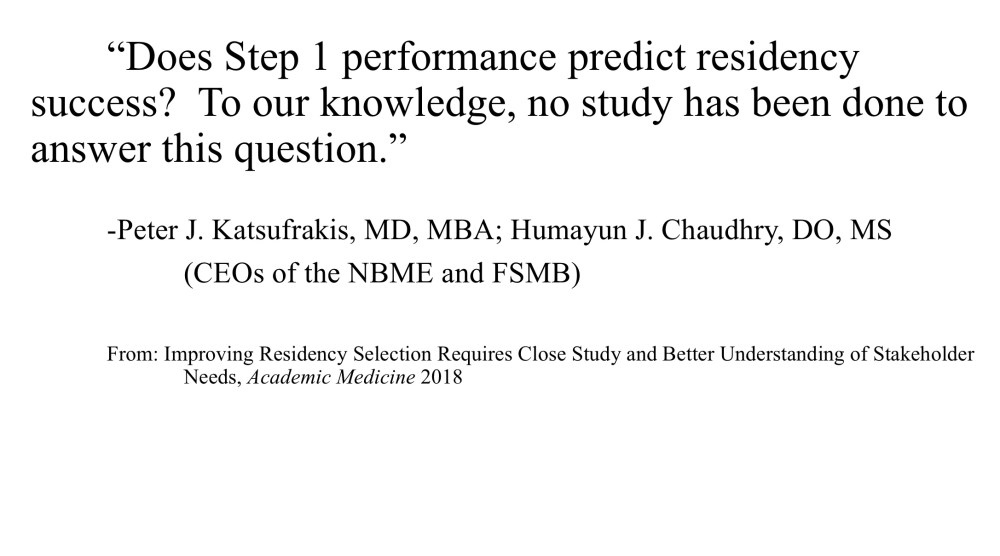

Drs. Katsufrakis and Chaudhry indicate their willingness to consider a pass/fail Step 1 if sufficient evidence were proffered:

If thoughtful, evidence-based analysis by relevant stakeholders identifies a better approach to quantifiable USMLE scoring, we would be open to such an option and would present it to USMLE governance… for consideration.

…before closing with this conclusion (emphasis added).

Policy changes to mitigate such emotional impact should be supported by adequate evidence, solid reasoning, and informed discussion so as not to worsen the status quo. Simply eliminating high quality information presently available during residency application risks worsening the process and desired outcomes.

On the surface, this sounds completely reasonable. As physicians, scientists, and educators, we don’t want to make a change without it being “supported by adequate evidence.” Right?

___

There are at least two problems with the way the argument is framed:

- It sets up an impossible standard. How can we obtain adequate evidence for or against a pass/fail USMLE Step 1 without, you know, having a pass/fail USMLE Step 1 to study? Whether a pass/fail policy is net beneficial or not is a testable question – but testing it requires buy-in from the very group arguing against it.

- It supposes that we have adequate evidence in support of the status quo. And this, in my opinion, is the bigger issue. In medicine, we demand that a new therapy be proven at least non-inferior to the current standard of care before considering its use. This is sound policy when the standard of care is itself supported by evidence. But is this the case for the USMLE Step 1?

What data support the use of Step 1 scores in residency selection?

Drs. Katsufrakis and Chaudhry directly address this very question in their article.

When pulled from context and read in isolation, this reads as a truthful statement that, yeah, we honestly don’t have any data to support using Step 1 scores in residency selection. You might think that a statement like this would be followed by a call to go out and collect some data so we can all have an informed debate.

Instead, this statement is juxtaposed to a strained argument that although we don’t have any data that Step 1 scores are useful in selecting residents, they are probably still a good thing to use:

“Does Step 1 performance predict residency success? To our knowledge, no study has been done to answer this question. However, performance on Step 1 has been shown to correlate highly with similar licensing exams, and these exams have been correlated with quality metrics of potential interest to secondary score users like residency programs, including cardiac morbidity and mortality [2], likelihood of state board disciplinary action [3], and measures of preventive care and acute and chronic disease management [4]. Thus, performance on Step 1 can provide useful information to inform part of a residency selection decision.”

In case you didn’t follow the logic, the argument is that USMLE Step 1 is correlated with other tests… which are in turn correlated with metrics that matter. Therefore, Step 1 scores must also be correlated with these metrics, and thus also provide “useful information.”

I won’t dwell for long on how this logic might be faulty. Suffice it to say that the annals of biomedicine are rife with examples showing that just because X correlates with Y, and Y correlates with Z, it does not always follow that X correlates with Z. For now, let’s take these assertions at face value. Let’s pull the references.
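For readers who want to see the X, Y, Z point in numbers, here is a minimal sketch. The data are a made-up toy set (not drawn from any of the cited studies), and the `pearson` helper is written out by hand purely for illustration. Y is built as X plus Z, so it correlates with each of them, while X and Z are unrelated by construction.

```python
from math import sqrt

def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((p - mean_a) * (q - mean_b) for p, q in zip(a, b))
    var_a = sum((p - mean_a) ** 2 for p in a)
    var_b = sum((q - mean_b) ** 2 for q in b)
    return cov / sqrt(var_a * var_b)

# Hypothetical toy data: x and z are unrelated, and y is simply their sum.
x = [1, -1, 1, -1]
z = [1, 1, -1, -1]
y = [xi + zi for xi, zi in zip(x, z)]

r_xy = pearson(x, y)  # X vs. Y
r_yz = pearson(y, z)  # Y vs. Z
r_xz = pearson(x, z)  # X vs. Z

print(f"corr(X, Y) = {r_xy:.2f}")
print(f"corr(Y, Z) = {r_yz:.2f}")
print(f"corr(X, Z) = {r_xz:.2f}")
```

In this toy set, X and Y correlate at about 0.71, as do Y and Z, yet X and Z have a correlation of zero. Real exam data need not behave this way, of course; the point is only that the chain of correlations does not guarantee the conclusion.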

Reference #2 – Cardiac morbidity and mortality

This one was a little bit difficult to track down initially, because the article cited doesn’t appear to exist. Here is how reference #2 is cited in the manuscript:

I believe the actual article is this one. (Note the differences in the author list.)

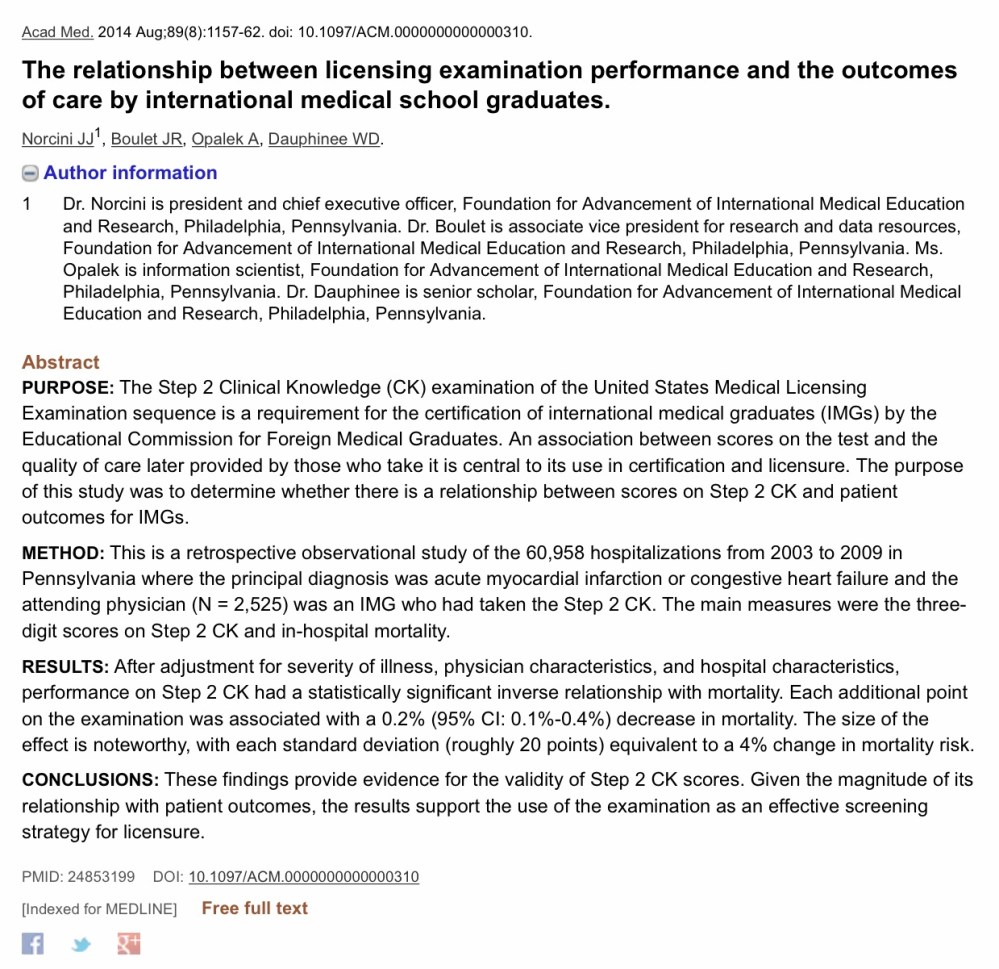

Let’s first highlight a couple of obvious things:

- This is a study of international medical graduates (IMGs) ONLY.

- The factor of interest was USMLE Step 2 CK scores. Step 1 scores were not assessed.

Would these findings hold up in a study of U.S. medical graduates, or with Step 1 as a predictor? I’ll leave it to you to decide if those things can be logically concluded.

Instead, let’s move on to the methods. The authors wanted to associate Step 2 CK scores with in-hospital mortality for two common diagnoses (acute MI and CHF). But studies like this are hard to do, because there are so many things that are associated with in-hospital mortality. You need to control for lots of other variables, and the authors did an admirable job of attempting to do so. Among other things, they adjusted for:

- Illness severity on admission

- Hospital volume

- Physician case volume

- Urban/rural location

Moreover, to ensure a homogeneous group, they limited their analyses to only three types of physicians (self-described family physicians, internists, and cardiologists); excluded patients who were transferred to another acute care hospital; and evaluated a group of physicians who had all been in practice for a relatively similar amount of time.

So what did they find?

“Conditioning on a number of patient, physician, and facility characteristics, we found better examination performance was associated with a decrease in patients’ relative risk for mortality. The size of the effect was noteworthy, with each standard deviation (roughly 20 points) equivalent to a 4% change in relative risk.”

Pretty impressive, huh? It is. Until you consider how other factors influenced the risk of hospital mortality.

For instance, which group of physicians studied (family physicians, internists, or cardiologists) would you expect to demonstrate the lowest relative risk of in-hospital mortality? If you’re like me, you might suspect that, all other things being equal, cardiologists would probably do a better job.

Wrong.

After the statistical adjustments described above, the authors found that board certified and self-designated family physicians had a 37% decrease in risk for mortality when caring for patients with MI or CHF. Patients whose care was provided by an internist enjoyed a 27% decrease in mortality risk.

Hmmm…

On the basis of these data, I think we must also accept one of the following additional conclusions:

- All hospitalized patients with acute MI or CHF in Pennsylvania should be managed by IMG family physicians.

- To reduce in-hospital mortality, cardiology fellowship training for IMGs should be eliminated.

- Experience acquired during cardiology training is inversely related to patient outcomes; fellowship programs should instead provide a Step 2 CK refresher course to attenuate this in the future.

- Despite an honest attempt at statistical adjustment, residual confounding is present in this study that makes it difficult or impossible to correlate mortality with physician factors of interest.

I’m going to go with #4.

___

Reference #3 – Likelihood of state board disciplinary action

This one is online here:

Unlike reference #2, this study did assess Step 1 scores. In a multivariable model adjusting for female gender and years since medical school graduation, Step 1 scores were associated with decreased odds for disciplinary action (OR for 1 SD unit increase: 0.78; 95% CI 0.75-0.82). The thing is, once Step 2 CK scores were added to the model, Step 1 scores were no longer associated with disciplinary action (OR 0.97; 95% CI 0.91-1.04).

Here, I think it is fair to conclude that USMLE scores are associated with a modest effect on likelihood of subsequent state board disciplinary action. But it doesn’t seem that Step 1 scores add anything if Step 2 scores are known.

More importantly, the effect magnitude is small. The overall probability of disciplinary action was low – so even a significant reduction in odds doesn’t translate to much in real terms. For instance, a physician with a Step 2 CK score of 190 (1 SD below the mean for the study participants) had an overall risk for disciplinary action of 1.2%. A physician with a Step 2 CK of 236 (1 SD above the mean) had a risk of 0.8%.

We can extrapolate those figures a bit using a ‘number needed to treat’ type of thinking. Suppose that you are a residency program director who wants to prevent the entry to your program of one additional resident who will go on to have a board action against him/her. You will need to match 250 applicants with USMLE Step 2 CK scores that are two SD above the group you don’t match. So it may work, but it’s gonna take you a while.
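The arithmetic behind that 250 can be sketched in a few lines. The two risk figures are the ones quoted above; the function and variable names are mine and purely illustrative.

```python
def number_needed(risk_higher, risk_lower):
    """Reciprocal of the absolute risk difference (NNT-style arithmetic)."""
    absolute_risk_reduction = risk_higher - risk_lower
    return 1 / absolute_risk_reduction

# Risks of disciplinary action quoted above from the study.
risk_at_190 = 0.012  # Step 2 CK of 190 (1 SD below the mean)
risk_at_236 = 0.008  # Step 2 CK of 236 (1 SD above the mean)

arr = risk_at_190 - risk_at_236
nnt = number_needed(risk_at_190, risk_at_236)

print(f"Absolute risk reduction: {arr:.3%}")
print(f"Applicants needed to avoid one board action: {round(nnt)}")
```

An absolute difference of 0.4 percentage points means roughly one board action avoided per 250 higher-scoring applicants matched, which is where the figure comes from.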

For me, the bottom line for this article is not that the science is faulty – it’s just that USMLE scores are a very inefficient way to predict an outcome like disciplinary action. (In fact, there is already literature demonstrating that by far the most important predictor of disciplinary action is unprofessional behavior in medical school.)

Plus, let’s be honest with ourselves: the reason that Dermatology and Orthopedic Surgery programs use USMLE Step 1 scores in residency selection is not to weed out candidates who are going to have a board action against them.

___

Reference #4 – Measures of preventive care and acute/chronic disease management

This comes from an almost 20-year-old paper in JAMA, which is online here:

This paper finds higher measures of care quality among family physicians who had higher scores on the Quebec family medicine certification exam (QLEX) from 1990 to 1993.

So what does that have to do with using USMLE Step 1 scores for residency selection in 2019?

I honestly haven’t a clue. Presumably, there are data suggesting that Step 1 scores are associated with scores on the QLEX, but when I enter these terms into PubMed, here is what returns:

___

So those are the references hiding behind the superscript. Do they support the use of USMLE Step 1 in residency selection as an evidence-based “standard of care” from which we should not deviate on the basis of current data? Or do they represent an attempt to stifle debate through tenuous logic and a play on scientific authority? You can be the judge.