Recently, I commented on whether USMLE Step 1 scores serve to “level the playing field” for applicants from lower-tier medical schools, as is often claimed. (If you missed that, you can catch up here.)

Today, I’d like to consider another claim made by the sponsors of the USMLE – that the objectivity of the Step 1 score serves as “an antidote to implicit bias.”

Yes, this was asserted by the presidents of the National Board of Medical Examiners and Federation of State Medical Boards in their recent invited commentary in Academic Medicine. Drs. Katsufrakis and Chaudhry are quoted in relevant part below, with my emphasis added.

“Research demonstrates some differences in USMLE scores attributable to race and ethnicity, with self-identified Black, Asian, and Hispanic examinees showing score differences when compared with self-identified White examinees [6]. Some cite this as evidence in support of eliminating Step 1 scores, at least for residency selection. However, the majority of these observed differences disappear when controlling for undergraduate grade point average and Medical College Admission Test scores. The presence of a national, standardized, objective measure such as the Step 1 score may serve as an antidote to implicit bias, counteracting some of the subjectivity inherent in evaluating other aspects of an applicant’s record.”

The argument

Did you follow their logic? The argument seems to be that:

1. Minority test-takers have lower USMLE Step 1 scores

2. Statistical adjustment for GPA/MCAT reduces the magnitude of these differences

3. Therefore, USMLE scores are not biased, and minority candidates are less disadvantaged by using Step 1 scores for selection than they would be by subjective evaluation of other parts of their record

To me, #3 seems to be a curious conclusion to draw from statements #1 and #2. Just because an instrument is national, standardized, and objective doesn’t mean it’s free from bias. And should we really be reassured by the fact that some of the differences in USMLE scores are explained by MCAT and GPA?

___

Let’s dig into this a little more. To start, as we’ve done in the past, let’s pull the reference.

Here is the article cited above:

Before we review the key findings, let me point out a few things to put the results in context.

- Racial background was self-reported by the test-taker.

- These are U.S. and Canadian medical graduates (no IMGs). Thus, the racial background reported should be interpreted in the cultural context of those countries.

- The data are contemporary – participants took the USMLE after 2010.

- The paper’s authors all work for the NBME – an organization that has a financial interest in the findings being reported in a positive way. Remember, in any scientific analysis, there is some room for discretion (in what data to report, what analyses to run, what variables to control, etc.). And here, I think we can assume that the authors would exercise that discretion in a way that paints their organization and their products in the most favorable light. Put another way, it’s hard to imagine the NBME would let a paper leave their shop if the conclusion reached was that their signature product (the USMLE) was racially biased.

Key findings: race and USMLE Step 1

So how do Step 1 scores vary by the test-taker’s racial background?

Interestingly, the authors chose not to report the unadjusted mean Step scores by racial background. The closest we get are these data in Table 2, which come from their multivariable regression models.

The “demographics model” at left adjusts for age at first Step 1 attempt, U.S. citizenship status, and English-as-a-second-language status. As you can see from the coefficients column, self-reported black test-takers scored 16.5 points lower on Step 1, and self-reported Hispanic test-takers scored 12.1 points lower than the white reference group.

The authors then add undergraduate GPA and MCAT into the model. This is the “covariates model” shown on the right-hand side of Table 2. After doing this, in the words of Drs. Katsufrakis and Chaudhry, “the majority of these observed differences disappear.” Specifically, the difference in USMLE score between black/Hispanic and white test-takers was reduced to only ~5 points – though it remained statistically significant.

You may be wondering what this multivariable model really tells us. If so, hold that thought – we’ll be coming back to this in a moment. But first, let’s discuss this:

Are the differences in Step 1 score by racial background meaningful?

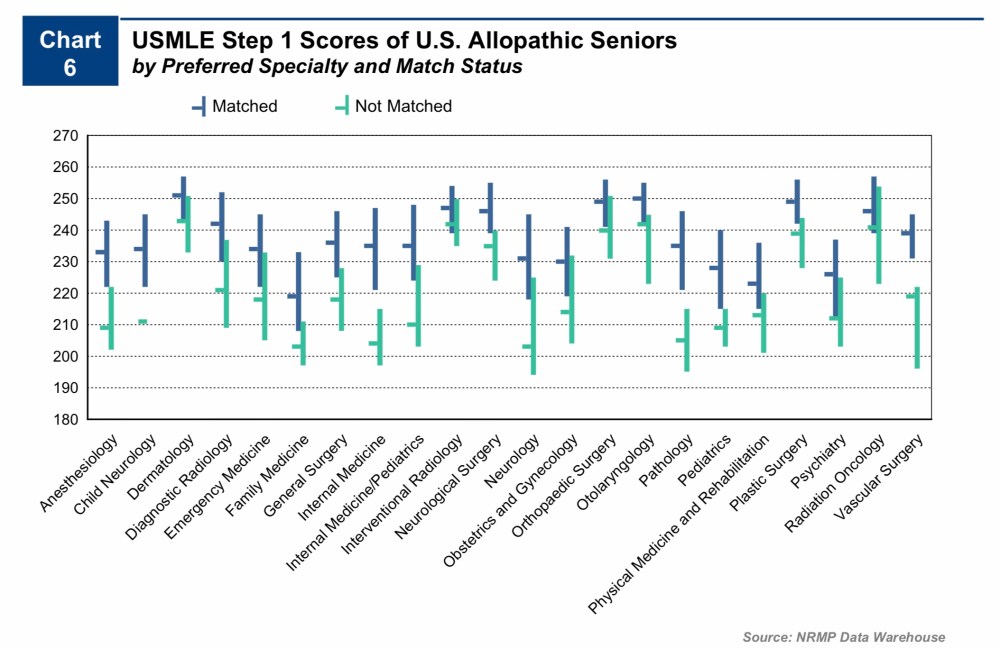

Considering the effect magnitude, it’s hard to argue they aren’t. The difference between black and white test takers is nearly one standard deviation (~20 points). And if we look again at the NRMP data, the mean difference in USMLE Step 1 scores between candidates who match and candidates who don’t is often less than 12-16 points – especially in the most competitive fields.

Even in internal medicine – the discipline that offers the greatest number of residency positions – the reduction in Step 1 score translates to fewer interviews for black applicants when programs use screening cutoffs.

The importance of findings like these should not be understated. Remember, when USMLE scores are reported to program directors, they aren’t reported after some kind of multivariable adjustment. It’s just a three-digit number.

Does the USMLE reduce bias toward under-represented minorities in medicine – or simply play it forward?

The answer may depend in large part on how you feel about the statistical adjustment described above.

Why did Rubright et al. adjust their analyses for undergraduate GPA and MCAT? Those data aren’t typically available to residency program directors, so expecting that a similar adjustment would mitigate the effect size in real life is silly.

The answer, I think, has more to do with logic than it does with mathematics.

If we accept that MCAT and undergraduate GPA are unbiased measurements of academic quality, then adjusting for them allows us to conclude that most of the differences in USMLE score by racial background are related to pre-existing differences in these applicants. In other words, the problem isn’t the USMLE – it’s the test takers! See! They had lower MCAT scores and GPAs coming in! How can we possibly expect them to perform as well as a more accomplished group on an unbiased instrument like the USMLE?

This seems to be the conclusion reached by all of the NBME authors. However, there is another conclusion that could be reached from the same data/model. For instance, if we do not accept that the MCAT and undergraduate GPA are unbiased measures of academic potential, then their known correlation with USMLE scores becomes a bit more vexing. After all, it could be that all three of these measures are to some extent measuring the same thing (i.e., societal advantage).
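To make that second reading concrete, here is a minimal simulation sketch in Python. Everything in it is an assumption made for illustration: the latent `advantage` variable, the effect sizes, and the group labels are hypothetical and are not taken from Rubright et al. In this toy world, both the MCAT-like score and the Step 1-like score depend only on `advantage`, and the simulated group differs only in its average `advantage`.

```python
import random


def ols(X, y):
    """Least-squares coefficients via the normal equations (X'X) b = X'y."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(k)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                f = A[r][col] / A[col][col]
                A[r] = [a - f * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


def simulate(n=20000, seed=0):
    """Return the group coefficient before and after adjusting for 'MCAT'.

    All parameters below are invented for illustration only.
    """
    rng = random.Random(seed)
    group, mcat, step1 = [], [], []
    for _ in range(n):
        g = rng.randrange(2)  # 1 = hypothetical disadvantaged group
        advantage = rng.gauss(-1.0 * g, 1.0)  # latent and unobserved
        mcat.append(advantage + rng.gauss(0, 0.5))  # noisy proxy of advantage
        step1.append(230 + 12 * advantage + rng.gauss(0, 10))
        group.append(g)
    unadjusted = ols([[1, g] for g in group], step1)
    adjusted = ols([[1, g, m] for g, m in zip(group, mcat)], step1)
    return unadjusted[1], adjusted[1]  # group coefficient in each model


if __name__ == "__main__":
    unadj, adj = simulate()
    print(f"group gap, unadjusted:        {unadj:.1f}")
    print(f"group gap, adjusted for MCAT: {adj:.1f}")
```

Under these made-up parameters, the unadjusted gap comes out near -12 points and the adjusted gap near -2.4, so most of the difference "disappears" after adjustment even though nothing about the underlying disadvantage has changed. The shrinkage by itself cannot tell us which interpretation is correct.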

To support the NBME’s interpretation, Rubright et al. make this assertion:

“MCAT scores themselves have not shown evidence of bias against underrepresented minority test takers. [31]”

___

Is the MCAT an unbiased instrument?

The reference noted above (#31) is this paper:

The paper includes lots of interesting data and thoughtful analysis, and is worth a read. But for our purposes here, I’d like to highlight two things:

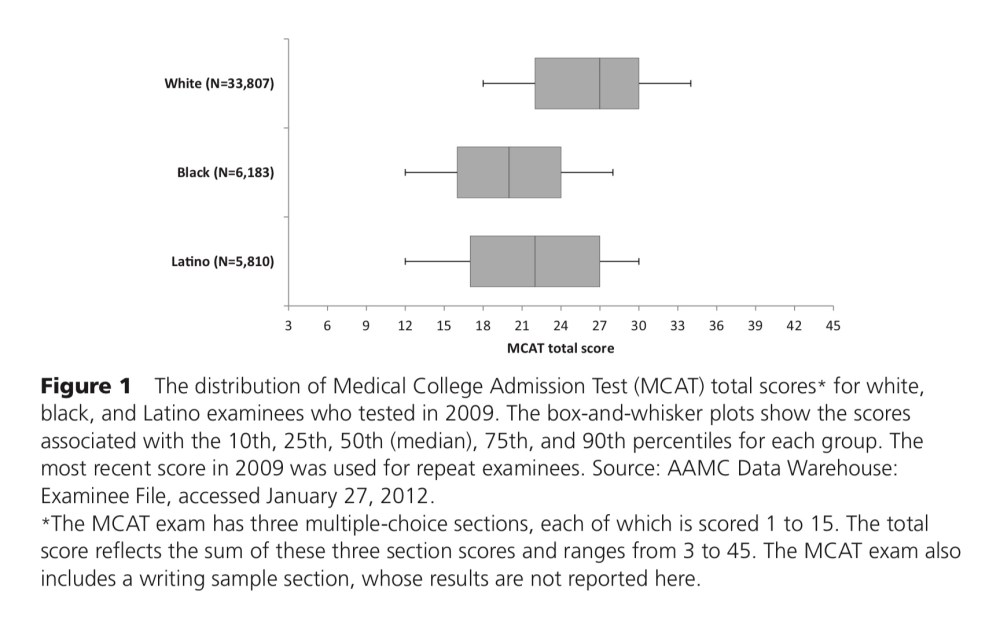

First, just as with USMLE scores, minority test-takers for the MCAT did have lower scores overall:

Second, here is how the authors sought evidence of test bias (emphasis added).

“‘Differential prediction’ analysis is used to examine whether a given MCAT score forecasts the same level of future performance regardless of the examinee’s race or ethnicity. If the MCAT exam predicts success in medical school in a comparable fashion for different racial and ethnic groups, medical students with the same MCAT score will, on average, achieve the same outcomes regardless of racial or ethnic background. On the other hand, if their outcomes differ significantly, test bias in the form of differential prediction exists because the prediction will be more accurate for some groups than for others.”

So how did they measure success in medical school?

By using graduation rates… and performance on the USMLE.

___

At this point, I could point out the circular logic here: USMLE scores aren’t biased because they’re explained by MCAT scores. And MCAT scores aren’t biased because they predict the USMLE.
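A differential prediction check of the kind quoted above can be sketched in a few lines of Python. This is a simplified stand-in, not the method used in the cited paper: it fits one pooled regression line and compares mean residuals by group. The data-generating model (a hypothetical latent `advantage` that drives both scores) and all numbers are assumptions for illustration.

```python
import random
from statistics import mean


def fit_line(x, y):
    """Simple linear regression of y on x; returns (intercept, slope)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return my - slope * mx, slope


def mean_residual_by_group(predictor, outcome, group):
    """Mean of (actual - predicted) per group under one pooled line.

    A negative mean residual means the pooled line over-predicts that group.
    """
    intercept, slope = fit_line(predictor, outcome)
    residuals = {}
    for p, o, g in zip(predictor, outcome, group):
        residuals.setdefault(g, []).append(o - (intercept + slope * p))
    return {g: mean(r) for g, r in residuals.items()}


def simulate(n=20000, seed=1):
    """Toy data: both scores are driven by the same latent 'advantage'."""
    rng = random.Random(seed)
    predictor, outcome, group = [], [], []
    for _ in range(n):
        g = rng.randrange(2)  # 1 = hypothetical disadvantaged group
        advantage = rng.gauss(-1.0 * g, 1.0)
        predictor.append(advantage + rng.gauss(0, 0.5))  # "MCAT-like" score
        outcome.append(230 + 12 * advantage + rng.gauss(0, 10))  # "Step 1-like"
        group.append(g)
    return predictor, outcome, group


if __name__ == "__main__":
    predictor, outcome, group = simulate()
    for g, r in sorted(mean_residual_by_group(predictor, outcome, group).items()):
        print(f"group {g}: mean residual {r:+.2f}")
```

With these assumed parameters the raw gap in the outcome is about 12 points, while the mean residuals by group stay within about a point of zero, and the simulated lower-scoring group is, if anything, slightly over-predicted. A check like this would report little evidence of bias against that group, even though both scores here are built to track the same underlying advantage.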

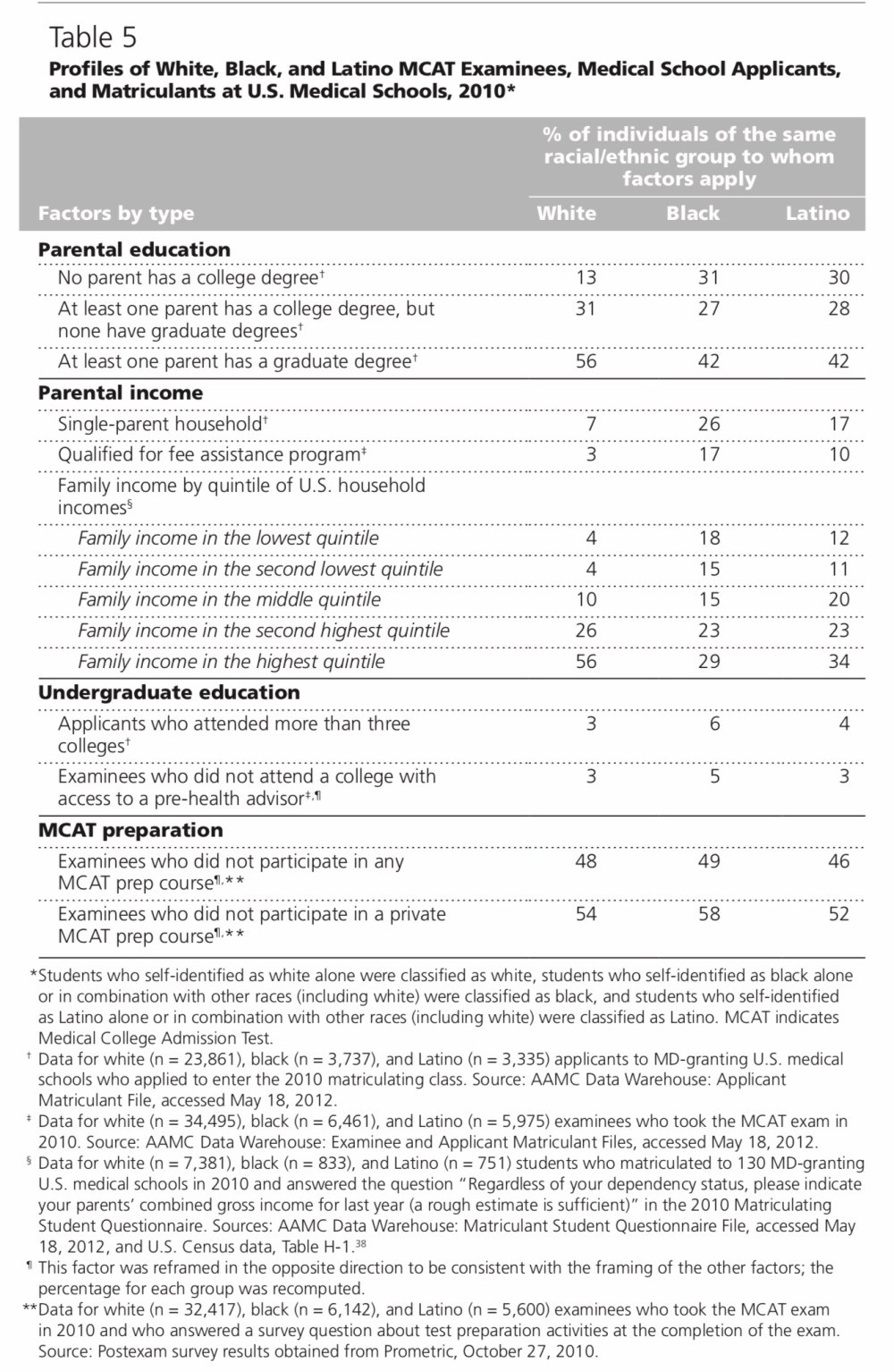

However, there are other points in this paper that deserve some attention. Here, for instance, are data demonstrating significant differences in both MCAT scores and parental income by racial background among prospective medical students.

What are these tests really measuring?

So if it’s true that socioeconomic differences are responsible for racial differences in MCAT scores… and MCAT scores are responsible for racial differences on the USMLE… then maybe Drs. Katsufrakis and Chaudhry should be a little less reassured that so much of the difference in USMLE is “explained” by the MCAT. Surely, there is no sound argument to be made for selecting residents based on socioeconomic status. Maybe we should instead regard the differences in MCAT and USMLE as reflecting structural racism that begins well before a student even thinks of applying to medical school.

___

After working through this, here are my own takeaways:

- Just because a measurement tool is standardized or objective doesn’t mean it’s free from bias.

- To the extent that differences in USMLE score by racial background exist, they are detrimental to minority candidates when scores are used for residency selection. (Remember, program directors are reviewing only raw, three-digit data.)

- MCAT and USMLE scores may not be measuring only what we think they are – and are likely biased by factors that have no relationship with physician quality or patient care.

- I don’t doubt for a minute that implicit (and explicit) racial bias enters into residency selection in a number of areas – but it remains difficult for me to believe that using Step 1 serves as an antidote for much of anything.
