Recently, I pointed out USMLE Step 1 score creep – the steady improvement in Step 1 scores over time. My ‘manuscript’ was intended to be tongue-in-cheek. The goal was to propose a conclusion so laughably preposterous that it would make you stop and think, hey, why is it that Step 1 scores should be rising? I hoped that the winking smiley face emoji would be a tip-off that satire awaited, but judging by some of the feedback I’ve received, many readers missed the joke.

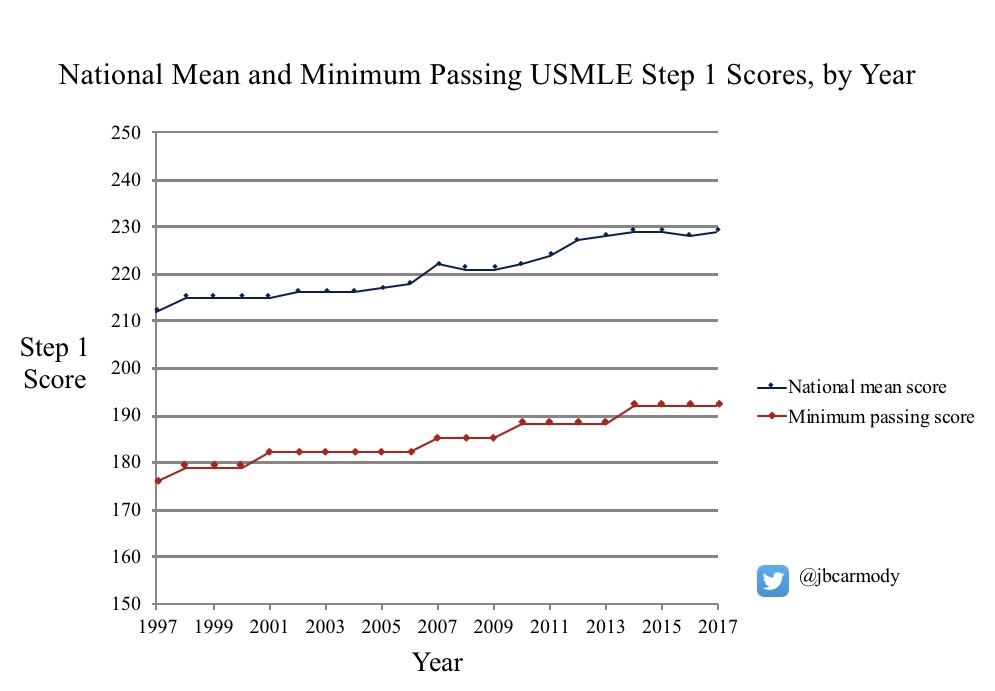

So, one more time, with tongue-completely-removed-from-cheek, let me show the key graphic, and see what you think:

- When I look at the blue line, I actually do not think that medical students today are any smarter than those 10-20 years ago. Instead, I conclude that they are squeezed more – by the intense pressure to achieve higher and higher scores on Step 1 for residency selection.

- When I look at the red line, I don’t see the orchestrations of a carefully-calibrated QI effort. I see a moving target, imposed by an organization racing to keep up with improving student performance, and driven by the desire to protect the public acceptance and relevance of their product.

So those are my real conclusions. You can – and should – make your own. But as you do, to provide the appropriate context, it may be helpful to answer one very important question: how is the USMLE Step 1 minimum passing score determined?

Answering this question gets a little messy. You’ll have to stick with me through a review of testing terminology and a dive into NBME history. But let’s do it. Come with me as we take a peek into the window of the USMLE sausage factory and see how the Step 1 minimum passing score gets set.

On test scores and reference points

The USMLE is designed as a criterion-referenced test – meaning that examinee performance on the test is rated against an objective standard. Think, for instance, of the test you would take to get your driver’s license. To pass, you have to demonstrate that you possess certain skills and knowledge about the operation of a motor vehicle that exceed an objective threshold deemed necessary to drive safely.

That stands in contrast to a norm-referenced test. For a norm-referenced test, the intention is to compare the performance of one examinee relative to another. Think, for instance, of the SAT or ACT. The measuring stick isn’t set against some central core of knowledge; the test simply measures how examinees stack up against each other.
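To make the distinction concrete, here is a minimal Python sketch of the two kinds of scoring. The function names, the cohort, and the cut score of 75 are all made up for illustration; nothing here is how any real exam is scored.

```python
from bisect import bisect_left


def criterion_pass(score, cut_score):
    """Criterion-referenced: compare the examinee to a fixed standard."""
    return score >= cut_score


def percentile_rank(score, cohort_scores):
    """Norm-referenced: percentage of the cohort scoring strictly below `score`."""
    ordered = sorted(cohort_scores)
    return 100.0 * bisect_left(ordered, score) / len(ordered)


# Hypothetical cohort of ten examinees (illustrative numbers only).
cohort = [52, 61, 67, 70, 74, 78, 81, 85, 90, 96]

print(criterion_pass(78, cut_score=75))  # True
print(percentile_rank(78, cohort))       # 50.0
```

Notice that the first function never looks at anyone else's score, while the second cannot be computed without the rest of the cohort.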

A (very brief) history of NBME exams

The original NBME exam was administered in October 1916. It was a veritable circus of assessment, taking place over 5 days, and including examinations in 10 subject areas. Each subject area was graded on a 100 point scale, with an overall score of 75% needed to pass.

Among the varied tasks required were these:

- Examination of three patients at the local hospital, with 80 minutes to take a history and physical, then “stand an oral examination on the clinical history and diagnostic conclusions”

- Demonstration of the appropriate incision used for shoulder amputation using a living model

- Injection of unknown solutions (such as strychnine or caffeine) into frogs, with observation and description of results

- Written pharmacology examination including the following essay question: “What is the essential element in dried thyroid gland? Describe its action when given in therapeutic doses. Name three conditions in which it is used as a therapeutic agent.”

- Essays on hygiene, including such topics as, “Name the diseases which may be conveyed by meat,” and “Describe a sanitary privy.”

By modern standards, this exam was a killer: only 50% of the ten individuals who participated in this exam received passing scores.

In search of objectivity

In the decades that followed, the format of NBME exams evolved, but the tests were still based upon oral and essay components. Grading tests in this way, however, raised questions of objectivity. Multiple-choice questions (MCQs) promised improved reliability over the inherent challenges involved in objectively evaluating, say, an examinee’s description of a sanitary privy.

In 1954, the NBME unveiled a new test, based entirely on MCQs. These new, MCQ-based NBME Parts I and II exams reported scores as a two-digit number (up to 100), and just as on the original exam, applicants needed a score of 75 to pass.

However, this two-digit score was often misinterpreted by students, who thought that it represented either the percentage of questions they got right or their percentile score. It reflected neither. The NBME MCQ exam was a norm-referenced test: the score simply represented how applicants performed relative to each other. The passing standard had to be set arbitrarily.

So how did the NBME determine where to set the standard for their new MCQ test? By looking at the pass rate of the old exam.

Under the old essay/oral examination system, around 86% of test-takers passed the initial certifying exam. Therefore, when NBME Part I began, the test was centered so that a score of 75 would result in a similar overall pass rate.
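As a rough sketch of what "centering" a norm-referenced test on a target pass rate involves, the Python below picks the lowest passing raw score so that about 86% of a cohort passes. The helper `cut_for_pass_rate` and the raw scores are hypothetical, and I am assuming no tied scores; the NBME's actual scaling procedure is not described here.

```python
def cut_for_pass_rate(raw_scores, target_pass_rate):
    """Return the lowest passing raw score so that roughly
    `target_pass_rate` of examinees score at or above it."""
    ordered = sorted(raw_scores)
    # Number of examinees who should fall below the cut.
    n_fail = round(len(ordered) * (1 - target_pass_rate))
    return ordered[n_fail]


# Hypothetical cohort: 50 examinees with distinct raw scores 101..150.
raw_scores = list(range(101, 151))

cut = cut_for_pass_rate(raw_scores, target_pass_rate=0.86)
pass_rate = sum(s >= cut for s in raw_scores) / len(raw_scores)

print(cut)        # 108
print(pass_rate)  # 0.86
```

Whatever raw score falls out of this calculation is then simply reported as a 75. The standard is defined by the pass rate, not the other way around.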

Setting a criterion-referenced standard

The norm-referenced NBME Parts I and II continued until the early 1990s. At that point, under threat of legislative action, the tests were reworked into the new, “comprehensive” NBME exam. Unlike its predecessor, the new comprehensive NBME exam would be criterion-referenced.

Making a new criterion-referenced test is a daunting task. What is the best way to determine what things all doctors should know?

The NBME chose to use a variation of the Angoff method. Very generally, this involves conducting structured interviews with content experts to determine the percentage of items that a minimally-competent examinee would answer correctly. (I wrote a little bit more about this method – with an example of what Angoff data look like – here.)

While the Angoff method and its variants have been criticized for a number of reasons – in particular, judges’ ratings are likely to be influenced by information they receive about actual exam performance – this general methodology is used to set standards for a number of criterion-referenced standardized tests.
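For readers who want to see the arithmetic, here is a minimal sketch of a textbook Angoff calculation in Python. This is the generic form of the method, not the NBME's particular variation, and the judges, items, and ratings are invented for illustration.

```python
from statistics import mean


def angoff_cut(ratings):
    """ratings[j][i] is judge j's estimate of the probability that a
    minimally competent examinee answers item i correctly.
    Returns the expected number-correct score for that examinee."""
    per_judge_totals = [sum(judge_ratings) for judge_ratings in ratings]
    return mean(per_judge_totals)


# Hypothetical panel: three judges rating a four-item test.
ratings = [
    [0.9, 0.6, 0.5, 0.8],  # judge A
    [0.8, 0.7, 0.4, 0.7],  # judge B
    [1.0, 0.5, 0.6, 0.9],  # judge C
]

cut = angoff_cut(ratings)
n_items = len(ratings[0])
print(f"{cut:.2f} of {n_items} items ({cut / n_items:.0%})")  # 2.80 of 4 items (70%)
```

The cut score depends entirely on what the judges believe a borderline examinee can do, which is why showing them actual performance data can shift the result.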

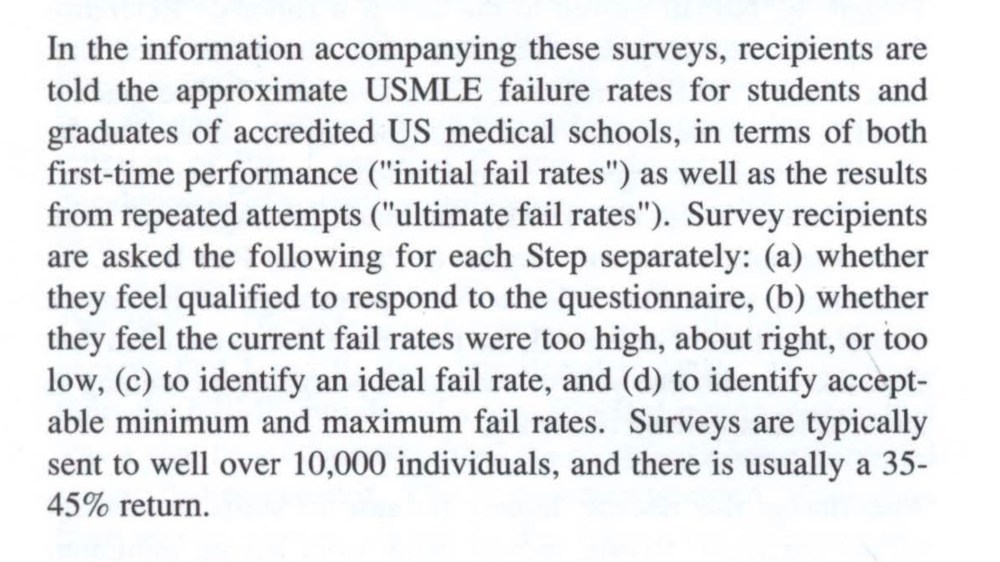

However, in setting the minimum passing standard, the NBME chose to use information beyond just the opinions of content experts. In particular, they also chose to consider input from a survey of state licensing boards, medical students, and faculty. These folks were asked whether the existing passing standard seemed too high, too low, or “about right.”

Wait… what?

Let’s think about this second information stream – the survey – for just a moment.

Why include this piece of information in setting the standard? Why not just ask experts what information doctors should know, and go with that?

You could argue with a straight face that the information from the survey is both valuable and necessary. After all, aren’t licensing boards in the best position to determine whether incompetent candidates are passing the current test and whether standards should be raised? Faculty might have some perspective on that issue as well.

But if this is the case, why survey medical students? What insight would they be expected to have on whether the standard was too high/low?

In my opinion, the real answer has more to do with the business of the NBME than anything else.

Suppose the new criterion-referenced NBME test came out, but had a dramatically different passing rate than the old norm-referenced test. Suppose that instead of 86% of students, only 50% passed. What would happen then? At best, the validity of the test would fall into question. And at worst, the market might come up with an alternative to such a test – which would threaten the NBME’s entire enterprise.

Remember, by this point, an MCQ-based NBME exam had been in existence for decades, with broad-based uptake by medical students and licensing boards. People in medicine had gotten used to the idea that a pass rate around 86% for the initial licensing exam seemed about right. If you make your living as a peddler of test instruments, why mess with a winning formula?

Therefore, if you’re the NBME, instead of just asking content experts to set an unbiased criterion-referenced standard, you create a multi-input process to give yourself a little bit of wiggle room and ensure the end product comes out in a form that “looks right”, and that the marketplace will accept. By using such a process, the NBME soothingly assured its stakeholders that “a major shift in pass-fail rates is considered unlikely” with the new test (as noted in the 1990 NBME Examiner newsletter).

And thus it came to pass that the pass rate for the new, criterion-referenced comprehensive NBME Part I exam was essentially identical to the pass rate for the old, norm-referenced, non-comprehensive NBME Part I. In 1991, the last year of the comprehensive NBME Part I exam, the overall pass rate was 85%.

The USMLE is born

The USMLE was created following a coordinated national effort to make a single testing pathway for medical licensure. (While most U.S. medical students took the NBME tests, some U.S. students and all foreign medical graduates took a different test – the Federation Licensing Exam, or FLEX.) In the horse-trading that followed between the NBME and FSMB (who sponsored the FLEX), the USMLE was born. Its first two parts – Steps 1 and 2 – would be based on the comprehensive NBME Parts I and II, and administered by the NBME. Step 3 would be based on the FLEX, and administered primarily by the FSMB.

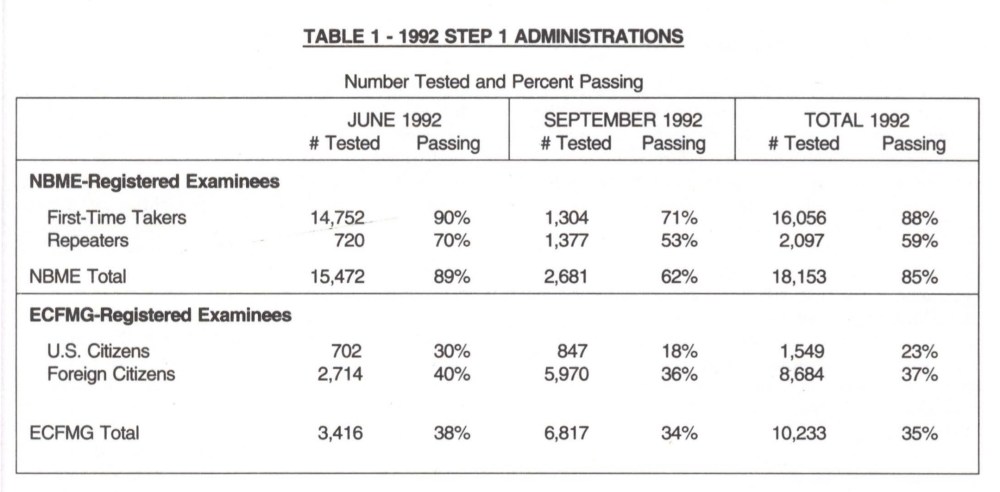

The first USMLE test-takers took the exam in June 1992. Here’s how they did:

Note that these data are from a pre-computer testing era, when the test was only administered on certain dates – so many of the September test-takers were repeaters from previous exams. The important number here is in the right-hand column for the NBME-registered examinees (i.e., U.S./Canadian medical school graduates). Note that, on the first iteration of USMLE Step 1, 85% of test-takers passed the exam.

—

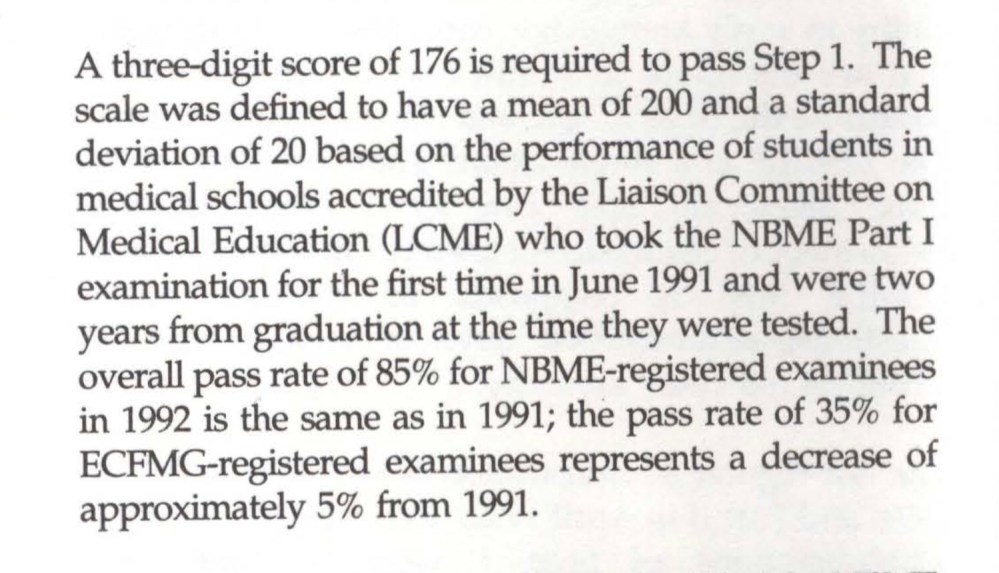

Did you catch that? If not, here’s another description of data from the first USMLE:

That’s right, the pass rate for the USMLE Step 1 was exactly the same (85%) as it was on its predecessor, the comprehensive NBME Part I (85%).

It is true that the USMLE Step 1 as a test instrument is a direct descendant of the NBME Part I exam – so we should expect that students would perform similarly. But remember also that the test-makers have an incentive to maintain the same or similar pass rate for the reasons mentioned above.

Let’s summarize

In summary: the original USMLE pass rate was based on the pass rate for the comprehensive NBME exam… which was in turn based on the pass rate on the norm-referenced NBME MCQ exam… which was itself based on the pass rate from the 1940s-era NBME oral/essay tests. A pass rate of 85-86% was what the market would accept, and so that’s exactly what the NBME delivered for 75 years.

Increasing scores, increasing standards

You already know what happens next. As highlighted in the clipping above, the original passing score for the USMLE Step 1 was 176, and the pass rate was 85%. But with a single licensing exam for all candidates, the USMLE now provided a common measuring stick that residency programs could use to evaluate all candidates. Even better, that measuring stick was objective and criterion-referenced. Why not use it to help pick the best candidates?

Compounding this was a mismatch between the number of residency positions offered in the Match and the number of applicants for those positions. In 1992, for instance, there were around 20,000 PGY-1 positions offered in the Match, and around 23,000 applicants. By the late 1990s, however, the number of applicants had risen to over 35,000, versus just 21,000 matched positions.

As candidates began applying to more programs and the Step 1 score became more valuable currency in residency selection, the mean national score began rising. By the year 2000, only 8 years after the test began, the national mean USMLE score was 215 (three-quarters of a standard deviation above its original mean), and test-making authorities had already begun their policy of gradually ratcheting up the minimum passing score.
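If you want to check that standard deviation figure yourself, the arithmetic is simple. Note the assumptions: this post does not state the original scale, so the sketch below assumes the commonly cited original Step 1 mean of 200 and standard deviation of 20. The function name is mine.

```python
def sd_units_above(mean_now, mean_original, sd_original):
    """How far the current mean sits above the original mean,
    measured in original standard deviations."""
    return (mean_now - mean_original) / sd_original


# Assumed original scale (not stated in this post): mean 200, SD 20.
shift = sd_units_above(mean_now=215, mean_original=200, sd_original=20)
print(shift)  # 0.75
```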

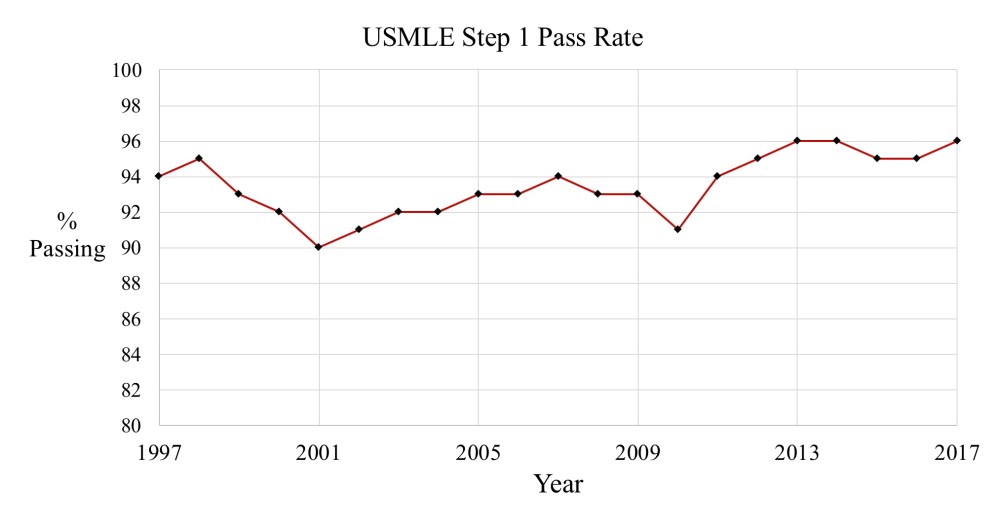

Therefore, while the pass rate increased initially, pass rates from 1997 onward have been stable, ranging from 90-96%, and reflecting the battle between students’ ever-increasing scores and the USMLE’s increasingly higher standards:

The business of the NBME

I have been critical in the past regarding financial conflict of interest at the NBME.

When I point this out, I don’t mean to suggest that the people who work there are corrupt. I think that they are people who believe in their organization’s mission.

But I also think that the people who work for the NBME are regular people, not altruistic demigods bestowed upon the world to bring us better psychometrics. And as regular people, they are motivated by regular things. Things like keeping your job; making your boss happy; getting promoted; acquiring power and influence; and making a better salary.

Further, I think that the people at the NBME are intelligent people, who are adept in data evaluation and clever in business strategy and who are altogether quite capable of steering an organizational course that protects their self-interest. They are not going to deliberately choose policies that destabilize their jobs or salaries or power.

Quite frankly, the NBME is in the business of selling tests. So when we evaluate their policies, we need to evaluate them through that lens.

Why adjust the minimum passing score?

Viewed in this context, it is perfectly clear why the NBME would continuously raise the passing score for their test. It’s good business.

In theory, there is no problem with a criterion-referenced test that everyone passes. I mean, if everyone who takes the test has the minimum competency, why shouldn’t everyone pass?

But in practice, things look a little different. Because if you charge students $600 to take a test that they all pass, pretty soon they’re going to start asking why they have to take it in the first place. (Don’t believe me? Look no further than the USMLE Step 2 CS experience. Note in particular how calls to eliminate the test for U.S. medical graduates – who passed the test at a 98% rate – were answered by a policy change to decrease the overall pass rate.)

Maintaining a relatively constant pass rate is common sense when you are selling a test that consumers accept as being valid in its current form.

And the easiest way to know if customers think the current pass rate is appropriate is to do a little market research: just ask your customers whether they think the current pass rate is “about right” or not. Even better, you can anchor their impression by providing the current pass rate, and gauge their opinion on what the “ideal fail rate” on your test should be.

(As a sidenote, how could anyone possibly know what the “ideal fail rate” for a criterion-referenced test should be? Isn’t the only correct answer, “it depends”? Including survey data like that when setting a passing standard has everything to do with maintaining market share, and nothing to do with protecting the public.)

Here, for example, is a description of the survey procedure used for a recent USMLE Step 1 minimum passing score review.

So you decide. What’s at the root of USMLE score creep? Needed increases in minimum passing standards? Or a transparent attempt to maintain face validity and market share of a product – by the very company that produces that product?