Lately, I’ve spent quite a bit of time highlighting problems with the USMLE Step 1. Now it’s time to talk solutions.

So if you turned the test over to me, here’s what I would do:

1. Make USMLE Step 1 Pass/Fail.

The original intent of the USMLE was to provide a binary decision on a candidate’s appropriateness for licensure. The test was not designed to distinguish among test-takers at the higher end of the scale, nor was it ever intended as an instrument to assess a student’s capability to perform in residency.

In fact, the old NBME Part I and II exams – the forefathers of USMLE Steps 1 and 2 – used to provide this frank disclaimer:

So the first thing we’re going to do is use the test as it was intended. Gone are three-digit scores. You either possess the requisite capability for licensure, or you don’t.

2. Set a valid standard.

The USMLE is a criterion-referenced test – meaning that the performance of test-takers is measured against an objective standard. The problem is, the process used to set that standard is not as objective as you might hope, and the standard can be manipulated to maintain the overall pass rate that the marketplace has come to expect. We need a standard that is valid and defensible.

So here’s how we’re gonna set the new standard.

First, we will let the USMLE Management Committee choose a group of exemplar physicians – doctors who represent, in one way or another, the best a doctor can be. They should come from all specialties and career paths and stages.

Then, without any special preparation, these physicians will take the USMLE Step 1 in its current form.

Questions that more than half of the exemplar physicians answer correctly get to stay. Questions that fewer than half of our best and brightest practicing physicians answer correctly are out.

My expectation is that the number of possible USMLE Step 1 questions is going to go way down. But is that really a problem?

If it is empirically true that the majority of our very best doctors do not recall that the Philadelphia chromosome is a (9;22) translocation, or that the ligamentum venosum is derived from the ductus venosus, then perhaps we should conclude that these tidbits of knowledge are not essential ones that all doctors need to be toting around in their heads for the rest of their careers.

To set the passing standard, we can look at the distribution of scores for the ‘experts’ on the included questions, and set a passing threshold that meets or exceeds the scores of at least a majority (if not 68% or 95%) of the exemplar physicians.
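For readers who like to see a procedure spelled out, here is a minimal Python sketch of the two steps above: drop the questions that most exemplars miss, then set the cutoff from the exemplars’ scores on what remains. It is only an illustration under my own assumptions. The function names, the data layout (a dict mapping each question to a list of right/wrong answers, one per exemplar), the toy responses, and the reading of “meets or exceeds a majority” as “at or above more than `fraction` of the exemplar scores” are all hypothetical, not anything the NBME actually uses.

```python
import math


def select_questions(responses):
    """Keep only the questions that more than half of the exemplars answered correctly.

    `responses` maps a question id to a list of booleans, one per exemplar
    physician, with physicians in the same order for every question.
    """
    return [q for q, answers in responses.items() if sum(answers) > len(answers) / 2]


def exemplar_scores(responses, kept):
    """Percent correct for each exemplar physician, counting only the kept questions."""
    if not kept:
        raise ValueError("no questions survived the exemplar filter")
    n_physicians = len(responses[kept[0]])
    return [100 * sum(responses[q][i] for q in kept) / len(kept) for i in range(n_physicians)]


def passing_threshold(scores, fraction=0.5):
    """Lowest exemplar score that is at or above more than `fraction` of all exemplar scores."""
    ordered = sorted(scores)
    # k is the smallest count strictly greater than fraction * n, capped at n.
    k = min(math.floor(fraction * len(ordered)) + 1, len(ordered))
    return ordered[k - 1]


# Hypothetical toy data: 4 questions, 5 exemplar physicians.
responses = {
    "q1": [True, True, True, False, True],     # 4/5 correct: kept
    "q2": [False, False, True, False, False],  # 1/5 correct: dropped
    "q3": [True, False, True, True, False],    # 3/5 correct: kept
    "q4": [True, True, False, True, False],    # 3/5 correct: kept
}
kept = select_questions(responses)
scores = exemplar_scores(responses, kept)
cutoff = passing_threshold(scores, fraction=0.5)
print(kept, [round(s, 1) for s in scores], round(cutoff, 1))
# ['q1', 'q3', 'q4'] [100.0, 66.7, 66.7, 66.7, 33.3] 66.7
```

Raising `fraction` to 0.68 or 0.95 pushes the cutoff toward the top of the exemplar distribution, which corresponds to the higher bars mentioned in parentheses.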

Do you think that’s too high of a bar? I’ll bet it’s not. I suspect that almost all of the students who pass Step 1 currently would outperform almost all of the practicing physicians on this basic science test.

At the very least, we’d have a transparent and common sense standard. It is hard to argue that a candidate lacks the basic material to become licensed as a physician if he or she knows as much relevant basic science as our very best licensed physicians do.

Time to implementation:

Three years.

This will give students, educators, and residency program directors time to adjust to the new system.

__

Wow, I finished that a little quicker than I anticipated. Since I have a little time left, I’ll go ahead and take some questions from the audience – just to save you a little time in the comments section.

__

Q: I’m an educator. Your plan doesn’t get at the root of the real problems in medical education. Why are you proposing that we continue to use a single multiple-choice question test to assess knowledge in 2019?

Agreed. Maybe I should have titled my plan “improvements” rather than “solutions.” The two steps mentioned above won’t fix every problem in medical education (or residency selection, or physician licensure, etc.). But these are rapidly implementable steps that would at least stop some of the bleeding.

__

Q: I’m a student from a less prestigious (or international) medical school. Without a scored USMLE, how will I get into a competitive residency?

First, I am skeptical of the claims that a scored USMLE “levels the playing field” for so-called lower-tier medical school applicants or IMGs. Some individuals benefit, but on the whole, I think the current system is more likely to perpetuate disadvantage.

Second, I do not support a pass/fail USMLE because I think that existing metrics are better. Many of them are crummy – and so is Step 1. I support a pass/fail USMLE so that we can reboot our residency selection processes and actually use metrics that matter to identify talent.

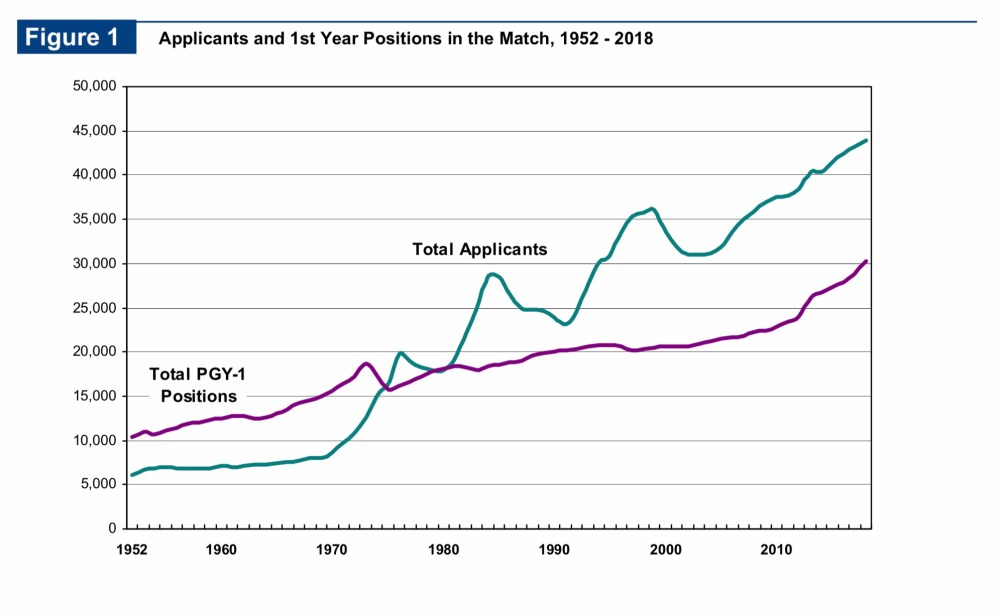

Third, it is important to remember that the root of the residency competition problem is that we have more residency applicants than we have residency positions. Here, for example, are data from the most recent NRMP Match report:

The mismatch between applicants and positions is absolutely a problem that deserves attention. But recognize that nothing we do with Step 1 scoring is going to fix it. Until or unless there is a perfect match between residency positions and the people seeking them, there will always be some element of competition for the most desired spots.

I understand that there are many students out there who would rather “compete” over the USMLE Step 1 score than over some other metric. I get it. It’s the devil we know.

But like I’ve pointed out before, if you only consider payoffs to individual applicants, residency selection policy is a zero-sum game. If we use Step 1 scores, then Student X benefits and Student Y goes unmatched. If we use something else, maybe the outcome changes – but one student’s gain is another’s loss.

However, if we move beyond the individuals and instead consider how residency selection policy affects society, it is clear that it is not a zero-sum game. Some metrics that could be used to pick residents will result in more net benefit to society than others.

What, exactly, does society gain by a student putting in a few hundred extra hours to increase their Step 1 score from 245 to 265? Precious little, I’d say. The educational return on investment for most things tested on Step 1 is poor, especially as scores increase. Even individual students whose residency applications benefit from high Step 1 scores are still losing out, because the countless hours put into test prep could have been spent on something else.

Remember, medical students are some of our very best and brightest. Do we really think that studying for Step 1 is the best way to apply their talents?

__

Q: I’m a residency program director. My program receives XXXX applications for only X spots. Without a Step 1 score, how can I figure out who to interview?

Sigh.

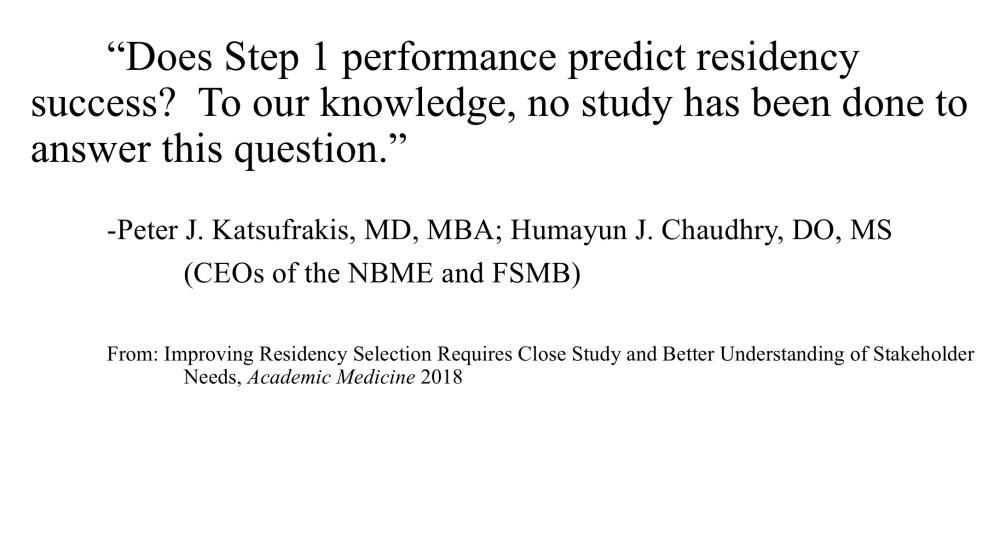

First, let’s note that this argument supposes that Step 1 scores are actually useful in finding the best residents. What evidence do we have that Step 1 scores actually identify the best residents?

Not much. In fact, this topic was recently expertly reviewed by the presidents of the NBME and FSMB, in an essay arguing for the maintenance of the status quo. (I mean, if anyone ought to know the data on the value of Step 1 scores in residency selection, it would be the guys who sell it to you, right?) And yet they make this acknowledgement:

So in light of that, let’s turn the original objection around a bit.

If there are no data demonstrating that Step 1 scores are useful for identifying good residents… then why should it matter what you use to screen residents for your program? Throw darts, draw names out of a hat, use a random number generator, whatever. For all we know, any of these methods would work equally well, right? Losing Step 1 scores for screening should be no big loss.

Now that retort – while logically correct – trivializes the real problem that residency directors have triaging applications. The number of applications per candidate has risen steeply in recent years, as I highlighted here.

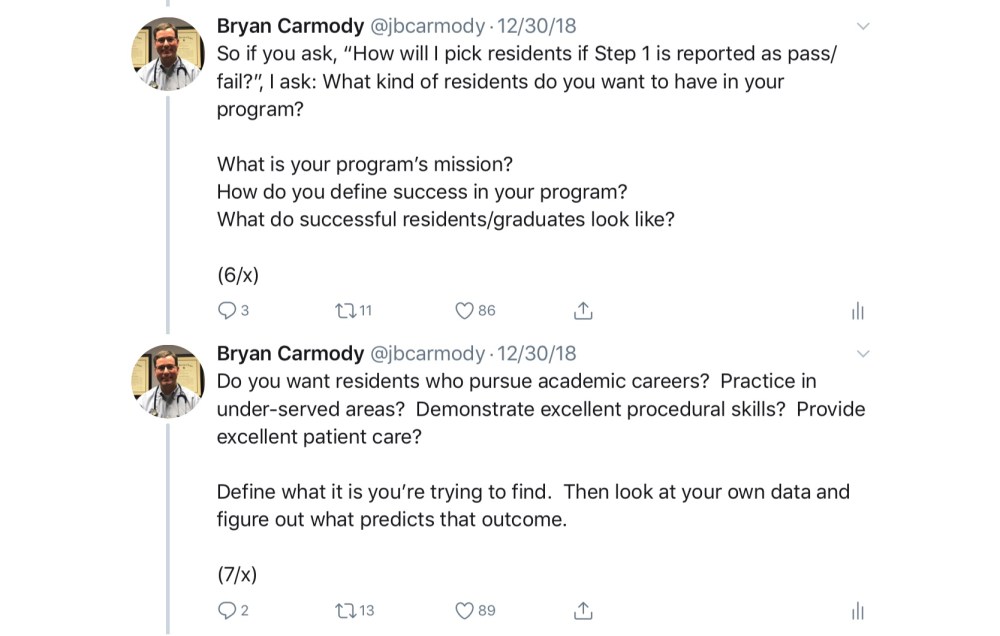

But instead of using a test that was never intended to do the thing that we’re using it for (and probably does a poor job at doing that thing anyhow), why don’t we try measuring things that actually matter in predicting the outcomes we desire?

The answer, of course, is that measuring other things is hard.

That doesn’t mean we shouldn’t try.

When I interview candidates for our residency program, I get a copy of their ERAS application – which easily runs to 20 pages in printed length. You can’t tell me that there isn’t enough data there to discern quality candidates without a Step 1 score.

Don’t have time to read the application? Then get help from people who do. Or define what your priorities are and have a computer search it out for you. (Betcha that with a little machine learning, you could even get the computer to spit out a three-digit score in the 200s if that’s really what you need.)
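As one crude illustration of “define what your priorities are and have a computer search it out,” here is a Python sketch that scores application text against a program-defined list of weighted phrases and then, tongue firmly in cheek, rescales the result into the 200s. It uses simple keyword matching rather than machine learning, and every phrase, weight, function name, and applicant below is hypothetical. A real program would have to decide, and then validate, what actually predicts success in its own residency.

```python
def screen_score(application_text, priorities):
    """Sum the weights of every priority phrase that appears in the application text."""
    text = application_text.lower()
    return sum(weight for phrase, weight in priorities.items() if phrase in text)


def rank_applicants(applications, priorities):
    """Return applicant ids ordered from highest to lowest screening score."""
    return sorted(
        applications,
        key=lambda applicant: screen_score(applications[applicant], priorities),
        reverse=True,
    )


def as_three_digit(score, max_score):
    """Rescale a screening score onto 200-299, for anyone who misses the old format."""
    if max_score <= 0:
        return 200
    return 200 + int(99 * score / max_score)


# Hypothetical priorities a program might define; the weights are illustrative only.
priorities = {"community health": 3.0, "quality improvement": 2.0, "teaching": 1.0}
applications = {
    "A": "Led a quality improvement project and worked as a teaching assistant.",
    "B": "Founded a student-run community health clinic and co-authored a quality improvement study.",
    "C": "Volunteered at a hospice.",
}
max_score = sum(priorities.values())
for applicant in rank_applicants(applications, priorities):
    score = screen_score(applications[applicant], priorities)
    print(applicant, score, as_three_digit(score, max_score))
```

Keyword matching is easy to game and blind to context, which is exactly why the choice of what to measure belongs to the program, not to the code.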

It’s time to be more creative and thoughtful about how we evaluate candidates. I’ve opined about this before, and I’ll spare you the full reprise right now.

My point here isn’t to convince you of the merit of a particular method for triaging applications. My point is to empower you to recognize that if you are a program director, you are in the best position to know what predicts success in your program or specialty – not me. And not the NBME, either.

We owe it to our students and their patients to cultivate and create a culture that rewards traits and achievements that truly benefit society. The problem is, the presence and ready accessibility of Step 1 scores makes it too easy to avoid this hard work. So define residency success. Measure residency success. Study residency success. Stop outsourcing your responsibility to the NBME.

__

Q: I am also a residency program director. I would like to point out that USMLE scores predict board passage – and if my graduates don’t pass their boards, my program gets shut down.

There is some truth here – but let’s separate fact from fiction.

Fact – Poor board passage rates can jeopardize a residency program’s accreditation.

Fiction – USMLE Step 1 scores are the only (or the best) way to identify residents at risk of board failure. (I mean, isn’t that the whole purpose of the in-training exam? Studies like this and this leave me scratching my head. Since when is predicting the results of the in-training exam a valid goal in and of itself?)

Fact – USMLE Step 1 scores are correlated with board passage. But I’ll bet the correlation is weaker than you think. The coefficient of determination (R²) in the studies linked above is ~25%. In other words, Step 1 scores explained only around a quarter of the variation in in-training exam scores. Hardly a certainty.
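To spell out the arithmetic, assuming the usual single-predictor linear case in which the coefficient of determination is the square of Pearson’s correlation coefficient r:

$$
R^2 \approx 0.25
\;\Longrightarrow\;
r = \sqrt{R^2} \approx 0.5,
\qquad
1 - R^2 \approx 0.75
$$

So even a respectable-sounding correlation of about 0.5 leaves roughly three quarters of the variation in in-training exam scores unaccounted for by Step 1.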

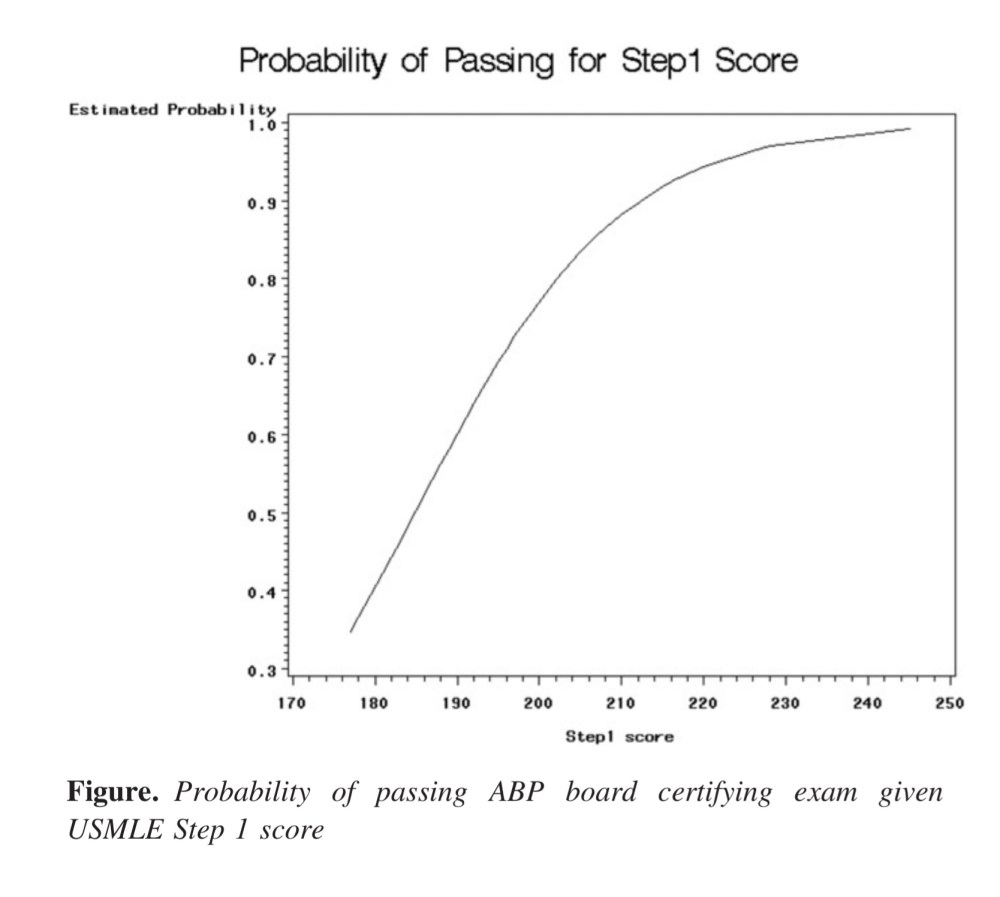

Fact – We use Step 1 scores for candidate selection in ranges that far exceed their usefulness in predicting board passage. Take, for instance, these data from pediatric residents. Once an applicant has a score north of 210, the chance of passing the American Board of Pediatrics certifying exam is 90%… and there is no Step 1 score that predicts a 100% board passage rate. (Similar data exist for other specialties – as I reviewed here.)

Fact – Even if it were the case that a Step 1 score perfectly predicted board passage, residency programs should take pride in their educational capabilities. Good programs should add value, not just slap themselves on the back for having the acumen to pick residents whose metrics suggested they would achieve board passage regardless of their program experience. If a candidate fits your mission and values, you should have enough faith in your ability as an educator to get them across the finish line.

__

Q: I am a basic scientist. Your plan will result in the removal of much of the basic science content presently tested on Step 1. Since the time of Flexner, an understanding of basic science has been deemed essential for physicians. Without basic science, what knowledge will separate doctors from PAs or NPs?

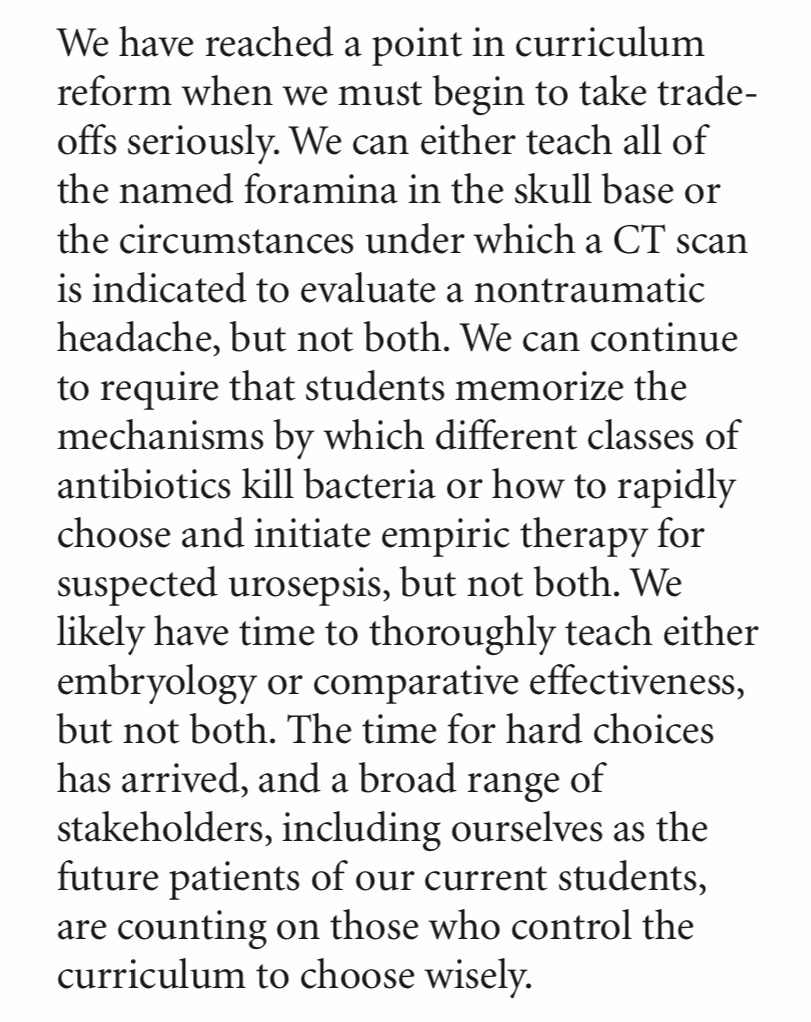

The fallacies in this argument (and related claims) have been laid bare by Vinay Prasad, and I have little to add to his commentary on this issue.

(mic drop)

(scampers back to mic)

Oh wait, I actually do have one more thing. This:

__

Q: I am a doctor. You say the basic science on Step 1 is not clinically relevant. However, once I diagnosed someone with [insert rare condition here], which I learned about while studying for Step 1. What say you now?

The problem with this is twofold.

First, it assumes that you wouldn’t have learned this useful piece of information in some other way if Step 1 were pass/fail.

Second, it ignores all of the patients with [insert some other condition] whose diagnosis you missed, because you were so busy studying for Step 1 that you lost opportunities to learn knowledge or skills that would have been even more valuable.

In theory, all medical or scientific knowledge has value. The question is, are we teaching the most valuable knowledge?

__

Q: I am a patient. I don’t like the sound of a pass/fail test. It sounds like we’re “dumbing down” medical education. I want medical schools to graduate the best doctors, not just minimally competent ones.

I do, too.

The faulty assumption here is that studying for Step 1 makes you a better doctor. It does – to a point. But we have long since passed the point of diminishing returns.

We don’t make better doctors by pushing our students to run harder and harder on the Step 1 hamster wheel. We make better doctors by teaching and evaluating things that actually impact patient care. Right now, that’s not where our priorities lie.

To the extent that licensure examinations exist to protect the public, we should honor that by aligning the exam content with things that matter to patients. And to the extent that physicians value their own self-governance through medical boards, we should inform licensure decisions with metrics that are valid, not just ones that look like they are because there’s a number attached.

__

Q: I am a doctor. I’ve been in practice for a few years. When I was a student, I took the USMLE. I studied hard, learned some useful things, matched in my chosen field, and now take good care of my patients. Things turned out okay for me. I just don’t see what all the fuss is about.

If you are a physician who is not currently involved in preclinical medical education, you may be surprised by how much things have changed since you took the USMLE – even if you took it fairly recently. This opinion is informed by my own experience.

I took Step 1 in 2005. I remember not even thinking much about Step 1 until the last few months of second year. After my finals at the end of May, I took a couple of days off to clear my head. Then, I studied for about 2 weeks, took Step 1, and went on to my clerkships. It wasn’t the most fun time of my life, but it was hardly the worst thing I’ve ever gone through.

Flash forward to 2016, when I took on an official teaching role at my medical school. Pretty quickly, it became apparent to me that since I had taken the exam, preparation for Step 1 had entered a malignant phase.

When I walk around my medical school, I see students:

- Carrying First Aid for the USMLE Step 1 from the first week of class

- Frantically studying Pathoma for the last 30 seconds before a lecture begins

- Answering UWorld questions on their phone as they walk down the hall

- Working through their Anki deck of pharmacology trivia during required lectures on topics relevant to the real-life practice of medicine, but not tested on Step 1

- Struggling to mentally stay afloat amidst the realization that, at any given moment that they are not studying, someone else is – and there aren’t enough residency spots for everyone

But don’t accept my anecdotes. Look for yourself at the rising mean Step 1 scores; the explosive growth of the for-profit test-prep industry; declining class attendance; disengagement from any educational activity not deemed “high yield” for Step 1; or increasing burnout in students who aren’t even halfway through medical school.

The problem isn’t the students. They are smart, efficient, and single-minded in pursuit of the goal we’re telling them is so important.

The problem is their environment. Today’s students are squeezed more and more by an educational system that has been perverted, where memorizing basic science trivia has become prioritized over learning to be a doctor in the truest sense of the word.

Students have described this as the “Step 1 Climate” – and in it, there is no doubt that climate change is occurring. In fact, the temperature increases a little every year, and it’s getting pretty stifling. The fact that people like me don’t remember it being so hot back in the day is a poor excuse for not looking at the thermometer now.

__

Q: I am a highly compensated executive for the National Board of Medical Examiners. Making Step 1 pass/fail could decrease my organization’s revenues, reversing decades of steady growth. Do you think this will please my Board?

Nope, I guess not. Objection sustained.