There’s a persistent objection to making USMLE Step 1 pass/fail that goes something like this:

Dear Sheriff,

When you suggest that we should make USMLE Step 1 pass/fail, you overlook one very important thing. See, I’m a program director, and if my residents don’t pass their boards, my program will get shut down. Whatever failings they have, USMLE scores predict board passage. We need to keep them.

Sincerely,

A program director

__

This assertion is made frequently in conversation and on social media. But even more insidiously, it’s often made in the academic literature, with little superscript numbers lending authority to claims about the high correlation between USMLE scores and board certification exams.

The NBME has also highlighted this argument in advance of InCUS, where the future of USMLE will be determined in just a few short days.

In the past, I pulled the references on the NBME’s claims about USMLE correlation with “improved practice.” I was unimpressed.

Today, let’s do the same thing with this oft-repeated claim about specialty board certification. Is it true? Or just another piece of USMLE mythology?

Let’s go to the literature…

Let’s start with my own field: pediatrics.

Pediatrics

McCaskill QE, Kirk JJ, Barata DM, Wludyka PS, Zenni EA, Chiu TT. USMLE Step 1 scores as a significant predictor of future board passage in pediatrics. Ambul Pediatr 2007; 7(2): 192-195.

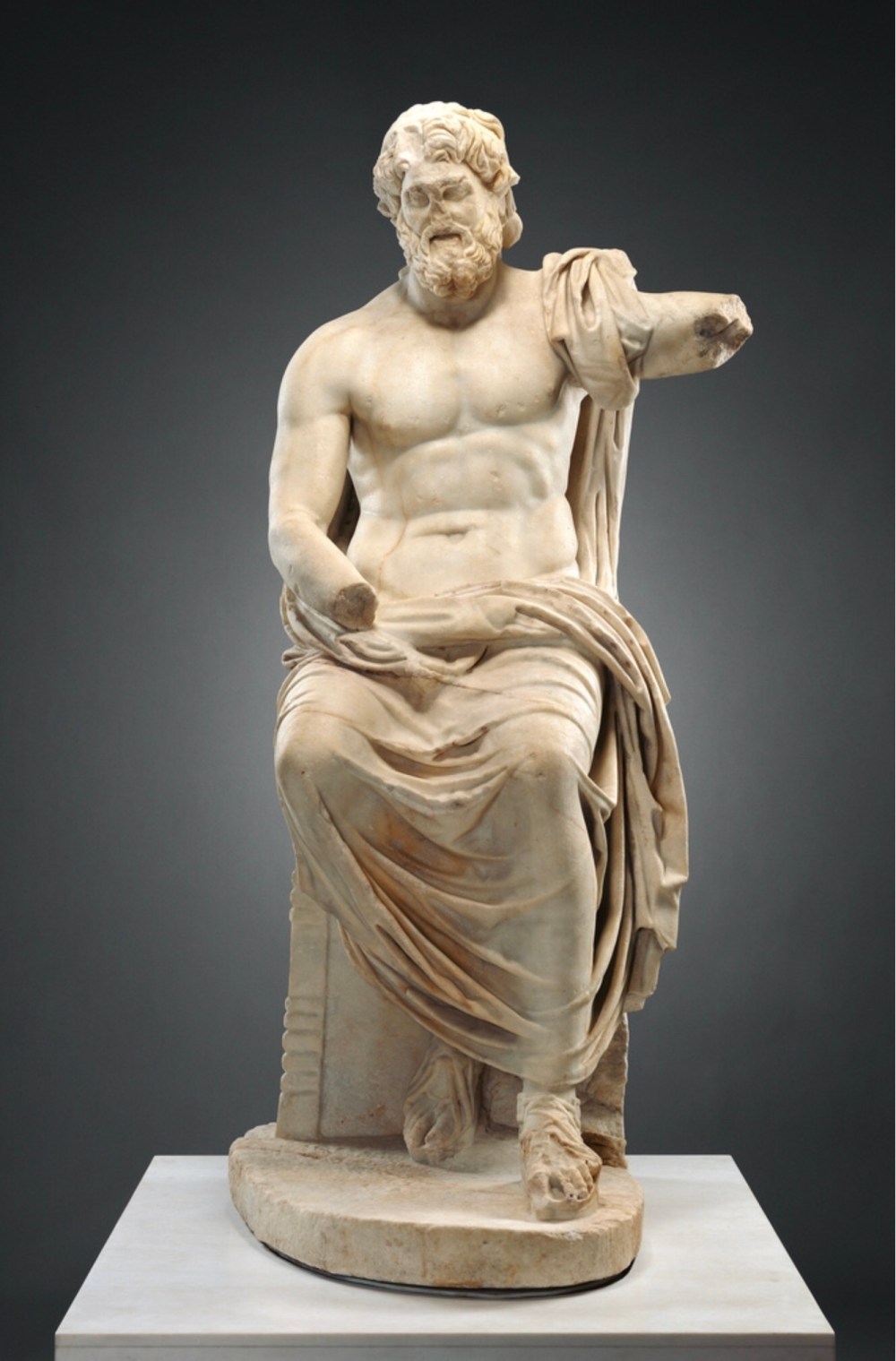

As the article’s title asserts, Step 1 scores did indeed predict board passage. The relationship is strong, as shown below:

But look closely at both the x and y axes, because there are two important things to note:

- Once the Step 1 score is >210, the probability of passing the American Board of Pediatrics certifying exam is ~90%.

- There is no Step 1 score that portends a 100% ABP exam pass rate.

Hmm…

These data don’t exactly fit the usual narrative about why program directors need Step 1 scores. Most applicants have a similarly high rate of board passage, even with Step 1 scores that are below average, and the only candidates who are more-likely-than-not to fail the ABP exam are ones who failed Step 1.

But this is just one specialty. Would this hold up in another discipline? Let’s look at the field with the largest number of residents…

__

Internal Medicine

Kay C, Jackson JL, Frank M. The relationship between internal medicine residency graduate performance on the ABIM Certifying Examination, yearly in-service training examinations, and the USMLE Step 1 examination. Acad Med 2015; 90(1): 100-104.

In this study, residents who failed Step 1 had twice the risk of failing the American Board of Internal Medicine Certifying Exam (ABIM-CE), and 99% of residents who had USMLE Step 1 scores >211 passed the internal medicine boards.

But these are medicine boards, and everybody knows surgical boards are tougher, right? Surgical PDs must really need Step 1’s crystal ball to keep their programs afloat. So let’s look at…

__

General Surgery

Shellito JL, Osland JS, Helmer SD, Chang FC. American Board of Surgery examinations: can we identify surgery residency applicants and residents who will pass the examinations on the first attempt? Am J Surg 2010; 199(2): 216-222.

Among surgery residents with Step 1 scores over 200, 85.7% passed the surgical boards on their first try.

Now, if that’s not a high enough board pass rate for your program, then you could limit yourself to only applicants in the top quartile of Step 1 scores: 87% of them passed on their first try.

The paper does not include data for any groups higher than the top quartile, so let’s go with that. Using a number-needed-to-treat calculation, if you limited your program to residents with top-quartile scores instead of just those with Step 1 >200, all you’d need to do is train ~77 surgeons in order to bask in the satisfaction of having one extra resident who passed the boards on his/her first try. The math shows it’ll work – it just might take a while.
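For readers who want to check the ~77 figure, here is a minimal sketch of the arithmetic. It assumes the conventional number-needed-to-treat formula (1 divided by the absolute difference between two rates) and uses the two first-attempt pass rates quoted above; the function name `number_needed` is hypothetical, chosen only for illustration.

```python
import math


def number_needed(rate_baseline: float, rate_selected: float) -> float:
    """Residents who must be trained under the stricter selection rule
    to gain one additional first-attempt board pass.

    Conventional number-needed-to-treat formula: 1 / absolute rate difference.
    """
    difference = rate_selected - rate_baseline
    if difference <= 0:
        raise ValueError("the stricter rule must have a higher pass rate")
    return 1 / difference


# First-attempt pass rates quoted above (Shellito et al., 2010).
step1_over_200 = 0.857  # residents with Step 1 > 200
top_quartile = 0.87     # residents in the top quartile of Step 1 scores

nnt = number_needed(step1_over_200, top_quartile)
print(f"{nnt:.1f}")    # prints 76.9
print(math.ceil(nnt))  # prints 77 (NNT is conventionally rounded up)
```

Note that this treats the two groups as simple alternatives, exactly as the back-of-the-envelope comparison in the text does; it is not a formal effect estimate.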

__

OB/GYN

Armstrong A, Alvero R, Nielsen P, Deering S, Robinson R, Frattarelli J, Sarber K, Duff P, Ernest J. Do U.S. Medical Licensure Examination Step 1 scores correlate with Council on Resident Education in Obstetrics and Gynecology in-training examination scores and American Board of Obstetrics and Gynecology written examination performance? Mil Med 2007; 172(6): 640-643.

In this study, all residents with USMLE Step 1 score >200 passed the American Board of Obstetrics and Gynecology examination.

So we’re now four disciplines into our literature review, and we have yet to find evidence that USMLE scores predict board passage in the higher ranges (where most candidates’ scores fall, and where Step 1 scores are most often used for candidate selection). So far, it doesn’t look like Step 1 scores add much for the average program.

But is the relationship different for highly competitive specialties, as some have argued? Maybe that’s where having really high Step 1 scores matters. So let’s look at…

__

Orthopedics

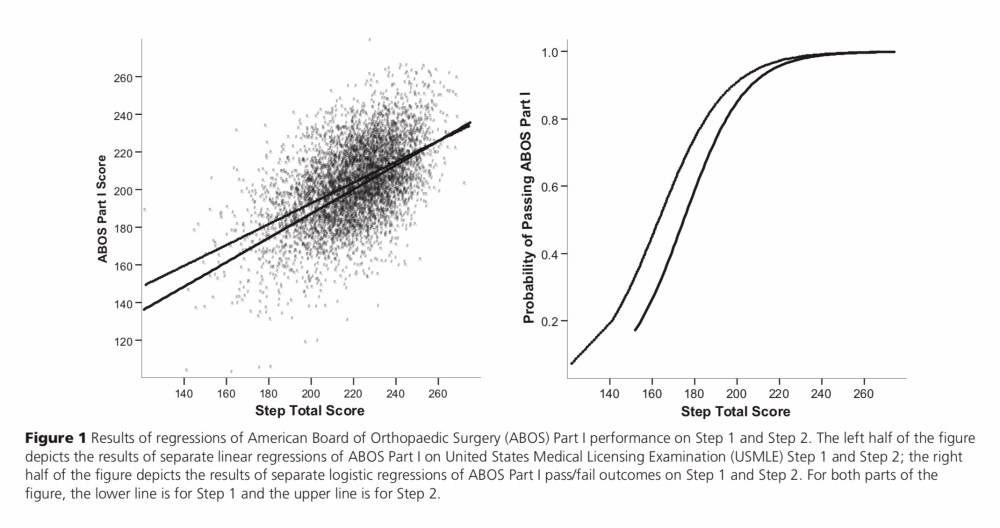

Swanson DB, Sawhill A, Holtzman KZ, Bucak SD, Morrison C, Hurwitz S, DeRosa GP. Relationship between performance on Part I of the American Board of Orthopaedic Surgery Certifying Examination and Scores on USMLE Steps 1 and 2. Acad Med 2009; 84(10 Suppl): S21-S24.

The abstract looks promising – just look at that strong conclusion!

However, the article also includes this figure, which looks eerily similar to those we’ve seen before.

Here, once you hit a Step 1 score of 205, you have a 90% chance of passing the American Board of Orthopaedic Surgery (ABOS) Part 1 exam, with diminishing returns thereafter. The only residents who have a >50% chance of failing the boards are ones who initially failed Step 1.

(By the way, this article was not written by orthopedic surgeons – it was written by analysts from the National Board of Medical Examiners, which explains the data interpretation and conclusion expressed in the abstract. Meanwhile, if you need a metric to predict scores on the orthopedics in-training exam, there was this study – which found an association between the SAT and in-training scores.)

Should we keep going? What the heck, let’s do one more…

__

Anesthesiology

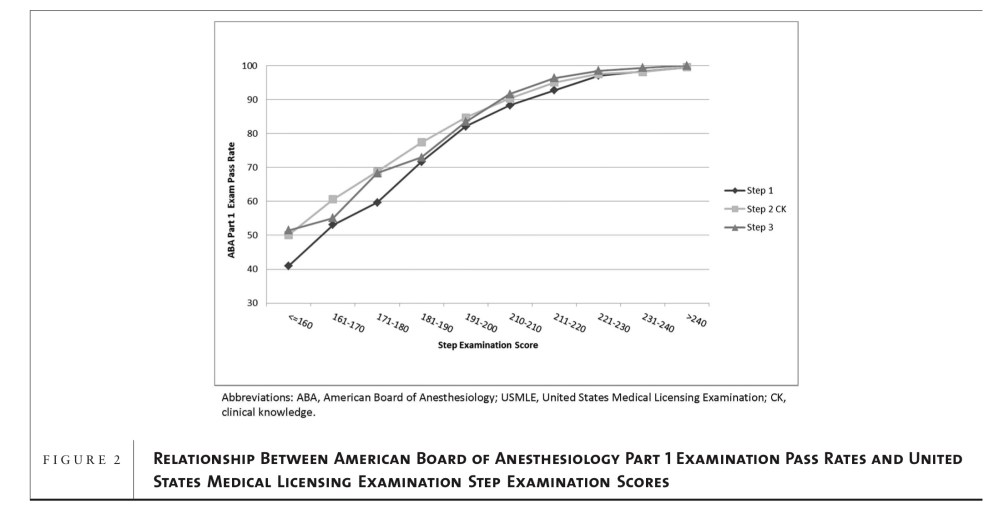

Dillon GF, Swanson DB, McClintock JC, Gravlee GP. The relationship between the American Board of Anesthesiology Part 1 certifying examination and the United States Medical Licensing Examination. J Grad Med Educ 2013; 5(2): 276-283.

This one was also put together by the NBME. So it should not surprise you to learn that, after noting the correlation between USMLE and American Board of Anesthesiology (ABA) Part 1 scores, their conclusion was that this association “provides support for the validity of both testing programs.”

For now, let’s ignore the circular logic, and just look at the data:

Again, an applicant with a USMLE Step 1 score >210 has a >90% chance of passing the anesthesiology boards on the basis of that metric alone. And again, for a program director to look at a given candidate and predict that he or she has a better than 50% chance of failing the boards, that candidate would have had to fail Step 1 initially.

__

CONCLUSIONS

Considering all of the data – across 6 disciplines, in surgical and non-surgical fields, including data from regular researchers and the NBME – the following conclusions are apparent.

1. Yes, there is an association between USMLE Step 1 scores and board passage rates.

2. The relationship between Step 1 scores and board passage is nonlinear.

3. We use Step 1 scores for screening and candidate evaluation in ranges well beyond those that meaningfully predict board passage. Once Step 1 scores exceed roughly 200-210, the probability of board passage is high, and higher scores add little.

Back to the original argument…

The argument that we need Step 1 scores to predict board passage and keep our residency programs accredited just doesn’t hold water.

For one thing, it’s not well-supported by the data, as shown above.

For another, the argument assumes that program directors have no way other than the Step 1 score to identify candidates who will pass the boards. That claim is hard to defend. I’m pretty sure there are other things in the application that could help you identify applicants with a high likelihood of certifying exam failure.

Third, and worst of all, the argument seems to assume that once you’ve taken a resident whose Step 1 score predicts a lower-than-ideal board passage rate, there’s nothing you can do about it. “Alas! We matched a resident who scored 199 on Step 1! The die has been cast! Our accreditation is lost!”

That kind of logic is not only false, it’s offensive. As I’ve written before, residency programs should take pride in their educational capabilities. Good programs should add value, not just slap themselves on the back for having the acumen to pick residents whose metrics suggested they would achieve board passage regardless of their program experience.

Remember, we do actually have a test that’s specifically designed to identify residents at risk of board failure. It’s called the in-training exam. Residents with low scores need extra attention and teaching. But then again, that’s kinda what we’re here to do. It’s a residency education program, not indentured servitude.

Thing is, you only give the in-training exam after a resident has matched. You can’t use it as a selection metric. But if an applicant fits your program’s mission and values, you shouldn’t be afraid to match them – you should have enough faith in your ability as an educator to get them across the finish line.

__

Let’s be honest with ourselves for a minute.

Step 1 Mania is not driven by students trying to get their Step 1 score over 200-210 so that they can establish their ability to achieve board certification. And most program directors are not using Step 1 scores for candidate evaluation in a way that is consistent with the data.

We’re all believers in the myth that Step 1 scores mean more than they do.

There’s no shame in that. I used to be a Step 1 believer myself… until I started looking at the data.
