Back in 2019, as part of my quest to convince whoever will listen that USMLE scores aren’t as useful for residency selection as we act like they are, I wrote about how many points each USMLE Step 1 question is worth. I hypothesized that passing USMLE Step 1 probably required answering around 65% of questions correctly – which suggested that each correctly answered question thereafter was worth around 1 point.

In discussing this, I was pretty honest that some of my calculations were based on assumptions and guesswork. After all, the USMLE does not provide precise details on how they calculate the three-digit score.

But lately, I happened upon a piece of data that helps shed some light on this issue for a related exam.

The Rosetta Stone

The key piece of information to help us translate percentages to USMLE three-digit scores comes from this paper:

A paper written by NBME authors contains data that helps us convert percentages of items answered correctly to USMLE three-digit scores.

The paper is written by psychometricians with the goal of addressing something completely different: how many incorrect answer choices should be included with multiple choice questions? Their point is that, on an exam where most questions are answered correctly, an answer choice that is chosen by <5% of test-takers may still serve a useful role as a distractor. And I guess that’s a good point, as far as it goes.

But what’s more interesting is the dataset they used to make this point.

See, they used data from “an examination for physician licensure.”

A “high stakes” examination.

An examination with “approximately 320… test items.”

Wait a second… this is sounding kinda familiar…

The exam described in the Raymond et al. paper sounds a lot like USMLE Step 2 CK.

The authors note that on this high-stakes medical licensing test with approximately 320 questions (read: USMLE Step 2 CK), the mean percentage of five-answer multiple choice questions answered correctly ranged from 73.4% to 74.8%. And the standard deviation was 8.3% to 8.9% across the various test forms.

This, of course, is a very interesting piece of data that we can use to approximate how many questions must be answered correctly to pass USMLE Step 2 CK.

The general method

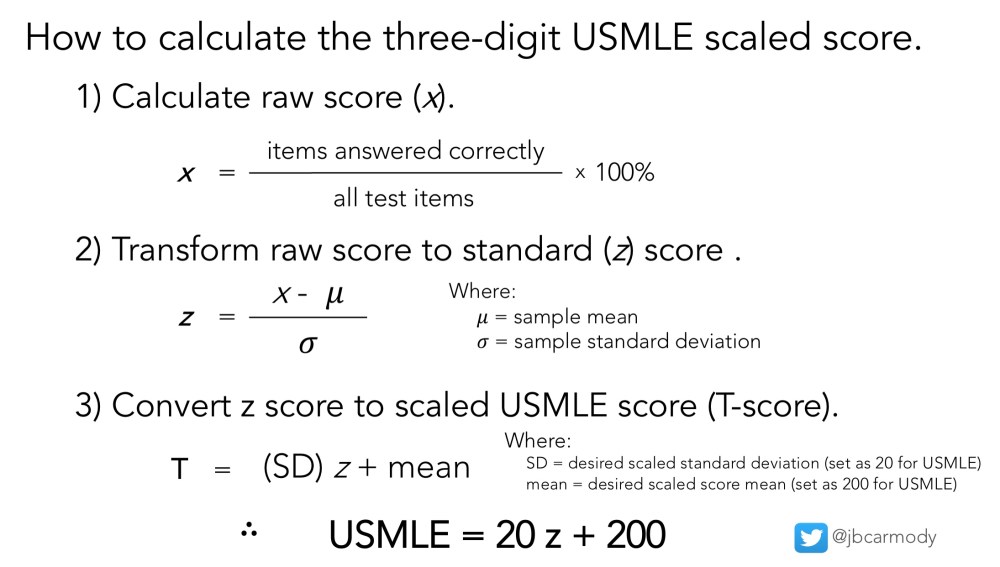

As I’ve discussed elsewhere, the three-digit USMLE scaled score is just a T-score.

The three-digit scaled score is just a linear transformation of the z (standardized) score.
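
Written out, a linear transformation of this kind takes the following general form. The symbols are labels chosen here for illustration, not official USMLE notation: x is the percentage of items answered correctly, the "raw" mean and standard deviation describe the distribution of those percentages, and the "scaled" mean and standard deviation describe the reported three-digit scores.

$$
z = \frac{x - \mu_{\text{raw}}}{\sigma_{\text{raw}}}, \qquad S = \mu_{\text{scaled}} + \sigma_{\text{scaled}} \cdot z
$$

So a raw score exactly at the raw mean maps to the scaled mean, and each raw standard deviation above or below it shifts the three-digit score by one scaled standard deviation.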

The trouble is figuring out what values to plug in for the variables in the equations. Although I was able to work out the relationship between the raw and the scaled score for early versions of the USMLE, I hadn’t stumbled on the necessary data to do this for contemporary versions of the exams. Until Raymond et al. laid it all out there for us.

Plugging in the numbers

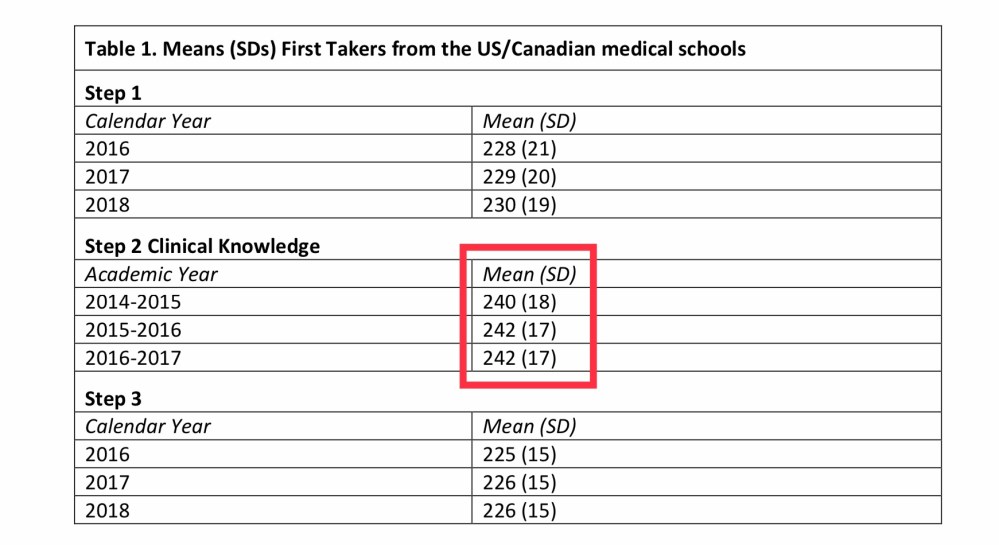

See, from the USMLE’s Score Interpretation Guidelines, we already know the mean and standard deviation for recent administrations of the Step 2 CK exam.

Lately, the mean Step 2 CK score has been 242 with a standard deviation of 17.

And we also know that the overall distribution of Step 2 CK scores looks like this:

The distribution of USMLE Step 2 CK scores.

Note that, although the distribution of Step 2 CK scores is not perfectly normal, it’s probably close enough to treat it as such. And if we do, we can use the formulae above to generate a scale that looks like this:

Approximate conversion between raw score and three-digit score for USMLE Step 2 CK.

In other words, to pass USMLE Step 2 CK, you only need to answer around 57% of items correctly.

Notice that the conversions are approximate, and differ slightly from the real distribution of scores reported in the USMLE’s interpretation guidelines.
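
For anyone who wants to reproduce the conversion, here is a minimal Python sketch. It assumes the raw mean and standard deviation sit at the midpoints of the ranges reported by Raymond et al. (73.4–74.8% and 8.3–8.9%), since the per-form values aren't public, and it treats the score distribution as normal. The function and constant names are hypothetical, chosen for illustration.

```python
# Sketch of the raw-percent <-> three-digit conversion, under stated assumptions.
# Assumption: raw mean/SD are midpoints of the ranges reported by Raymond et al.
RAW_MEAN = (73.4 + 74.8) / 2   # about 74.1% of items correct
RAW_SD = (8.3 + 8.9) / 2       # about 8.6 percentage points

# From the USMLE Score Interpretation Guidelines, as quoted in the text.
SCALED_MEAN = 242
SCALED_SD = 17


def percent_to_scaled(percent_correct):
    """Convert percent of items correct to an approximate three-digit score."""
    z = (percent_correct - RAW_MEAN) / RAW_SD
    return SCALED_MEAN + SCALED_SD * z


def scaled_to_percent(scaled_score):
    """Convert a three-digit score back to an approximate percent correct."""
    z = (scaled_score - SCALED_MEAN) / SCALED_SD
    return RAW_MEAN + RAW_SD * z


# Print a rough conversion table in 5-point steps of percent correct.
for pct in range(50, 96, 5):
    print(f"{pct}% correct -> about {percent_to_scaled(pct):.0f}")
```

Under these assumptions, 57% correct lands at roughly 208 on the three-digit scale. Nudging the raw mean or standard deviation within the reported ranges moves the result by a point or two, which is why the table should be read as approximate.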

How many questions must be answered correctly to pass?

To answer this question, we have to know how many of the 300+ questions on Step 2 CK are unscored experimental questions. It used to be that this was a matter up for some debate. But then, back in June 2020, the NBME inadvertently gave us the answer.

Until they were excoriated on Twitter, the NBME proposed allowing some examinees to take a version of the Step 1 or Step 2 CK exams without experimental items. This would have resulted in a USMLE Step 2 CK with just 240 items, meaning that ~60 items – or 20% of the standard test – were experimental.

In other words, with just 240 scored items, and just ~57% of items that must be answered correctly, an examinee needs only to answer around 137 questions correctly to pass the exam.
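
The arithmetic is simple enough to check in a few lines of Python. This sketch assumes a standard form of about 300 items (the 240 scored items plus the ~60 experimental ones) and that the ~57% passing threshold applies to the scored items only; the variable names are illustrative.

```python
# Back-of-the-envelope check of the numbers in the text.
SCORED_ITEMS = 240          # items on the proposed form without experimental items
EXPERIMENTAL_ITEMS = 60     # approximate, per the text
PASSING_FRACTION = 0.57     # approximate fraction of items needed to pass

total_items = SCORED_ITEMS + EXPERIMENTAL_ITEMS
experimental_share = EXPERIMENTAL_ITEMS / total_items
questions_to_pass = round(SCORED_ITEMS * PASSING_FRACTION)

print(f"Experimental share of the test: {experimental_share:.0%}")
print(f"Scored questions needed to pass: about {questions_to_pass}")
```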

Uh… so why does this matter?

If we’re gonna use USMLE scores for residency selection – a purpose for which these tests were not designed – then we should all understand exactly what we’re talking about when we’re talking scores above the passing threshold. It’s great that Applicant A scored a 233 and Applicant B scored a 250 on Step 2 CK – but what does that actually mean?

Well, if I’m right, it means that, over the course of a 9 hour testing day, Applicant B answered around 35 more questions correctly. How should we interpret that?

Here’s where randomness comes into play. To be confident that two Step 2 CK test-takers really differ in knowledge, their scores need to be more than 18 points apart (two standard errors of the difference for the test). So Applicants A and B, who are 17 points apart, might actually have exactly the same clinical knowledge – Step 2 CK can’t tell us for sure.
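
As a quick illustration, the comparison can be sketched in Python. The 18-point threshold is the figure quoted above; the function name is hypothetical.

```python
# Two scores are treated as distinguishable only if they differ by more than
# two standard errors of the difference (18 points, per the text).
SED_THRESHOLD = 18


def clearly_different(score_a, score_b, threshold=SED_THRESHOLD):
    """Return True if the gap between two scores exceeds the threshold."""
    return abs(score_a - score_b) > threshold


# Applicants A (233) and B (250) are 17 points apart.
print(clearly_different(233, 250))
```

This prints `False`: a 17-point gap falls just inside the range the exam can't reliably resolve.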

And even if two applicants’ scores were far enough apart that we could be confident that their performance differed, is that difference meaningful? What, exactly, does a 20 point difference mean? What percentage of questions do you need to get right to be a successful resident or practicing physician? (As a sidenote, we already know how many questions you need to get right to be a successful faculty member. When faculty “content experts” – the same experts who set the USMLE minimum passing score – took a block of USMLE Step 2 CK questions under standard conditions, they only got 67% of questions right.)

This is why the NBME should be more transparent about the way that three-digit scores are calculated.

Look, as fun as it is to reverse engineer the USMLE score from the little trail of breadcrumbs they’ve left for us, if we’re going to use scores to make life-altering decisions, we need to do better than the NBME’s black box – we need to all have a firm understanding of how the score is calculated so we can interpret it in a sensible and evidence-based manner.
