The NFL Draft will take place April 25-27, 2019. Being selected to play football at the highest echelon of the sport is obviously very different than matching into a residency program. And yet, amidst the breathless analysis, hot takes, and bold predictions by talking heads on ESPN, I can’t help thinking about residency selection.

See, in selecting players for the draft, NFL teams must overcome many of the same challenges that residency programs face.

- Each team has only a few draft picks. To fill each slot, they may have to evaluate hundreds of candidates.

- Teams want to draft the best possible talent – but they also have specific needs and priorities. Some teams want to find a wide receiver with breakaway speed; others, a pass-rushing defensive lineman. A ‘good draft’ looks different for each team.

- Players want to be selected highly in the draft, because the higher a player is drafted, the higher his salary. (I would argue that the student competition for “top” residency programs and selective disciplines is driven by similar forces.)

- Because each NFL team has a limited number of draft picks, the cost of a bad pick is high. Similarly, matching one bad resident puts a drag on the entire program; matching a few bad residents can put the entire program in jeopardy.

- Team executives who draft poorly don’t last long – just like program directors who have a poor Match.

- And finally, just like residents, potential NFL players come from a variety of different backgrounds – which often prevents direct comparison and makes identifying the best talent difficult.

This last point is especially salient to the #USMLEPassFail debate.

As I’ve discussed in the past, one of the most persistent objections to changing Step 1 to pass/fail is the concern that it will disadvantage talented students from ‘lower tier’ medical schools. Many of these students feel that a high Step 1 score is the only way that they can distinguish themselves to residency programs.

I’m skeptical that a scored Step 1 benefits students from ‘lower tier’ medical schools and international medical graduates (IMGs) as much as these groups often seem to believe. (Students from the most prestigious medical schools do very well on Step 1 – and if you look at the data without any preconceived notions, you might actually conclude that Step 1 scores are one of the most important factors maintaining the hierarchy in residency selection.)

But for today, let’s take this objection at face value – because it raises a number of bigger questions about the metrics we use to choose residents. Inspired by the maelstrom of football talent evaluation this week, let’s find out – what can we learn from the NFL Draft?

1) Do we need a single objective metric?

Defenders of a scored USMLE point out that Step 1 is the only objective metric with which to compare candidates from a variety of backgrounds.

How do NFL teams overcome this? While the colleges that produce the most NFL players are the ones you’d expect, even a casual NFL fan knows that some of the best players came from less-than-prestigious college backgrounds.

All Pro linebacker Khalil Mack was selected 5th overall in the 2014 draft after playing for the University at Buffalo – not exactly a hotbed of football talent. Future Hall of Famer Ben Roethlisberger was the Steelers’ first round draft selection in 2004 after playing for Miami University (no, not that one… the one in Ohio). Eagles QB Carson Wentz – the #2 pick in 2016 – played for perennial powerhouse North Dakota State.

NFL players don’t have to be licensed – so unfortunately, there’s no such thing as the NFLLE Step 1. Otherwise, drafting players would be a lot easier, because NFL executives could just use the player’s performance on a single test on a single day, compute a single three digit number that would predict the player’s entire playing career, and then take the rest of the day off. Right?

However, most NFL prospects do participate in the NFL Combine – a test of various physical skills performed in front of NFL coaches and talent scouts in the weeks leading up to the draft.

Notice that, although the tests are objective, there’s not just one of them – there are several. Why?

Because scouts recognize the limitations of a single metric, and appreciate that what predicts performance is position-specific.

After all, the skills needed to be a good quarterback are very different than those needed to be a linebacker. A scout is more interested in a wide receiver’s 40 yard dash or vertical jump than in his bench press. This brings us to our first lesson, which is this:

Lesson #1 – If you’re interested in predicting multiple competencies, you probably need multiple metrics.

And is medicine not like football? We’re all playing the same “sport,” but the skills required for success vary widely across specialties. Why do we think that a single test efficiently measures these?

_

2) What makes a useful metric?

The tests performed at the combine are clearly relevant to the game of football. Speed, power, muscular endurance, explosiveness are all important attributes – and in a highly competitive environment, even a small advantage in one of these could be the difference between victory and defeat. Right?

The problem is, all of these metrics are also imperfect surrogates for actually playing football. I’m not sure who will win next year’s Super Bowl, but I am confident that the game will not be settled by the teams squaring off and performing a 3 cone drill or seeing who can bang out the most reps on the bench press.

The objectivity of the tests at the Combine is appealing – but when you use imperfect surrogates to predict performance in a complex endeavor like playing football, you have to take them with a grain of salt.

For instance, the marquee event at the Combine is the 40 yard dash. The second-fastest verified 40 yard dash of all time – a blazing 4.24 – was run in 1999 by Rondel Menendez.

Wait, you don’t remember Rondel Menendez? I don’t, either. He never played a single regular-season NFL game, and was out of the league by 2001. And despite his impressive Combine performance, he was drafted 247th overall. See, NFL scouts know the limitations of these simple surrogates.

What you really want are metrics that approximate the complex performance that you are trying to predict.

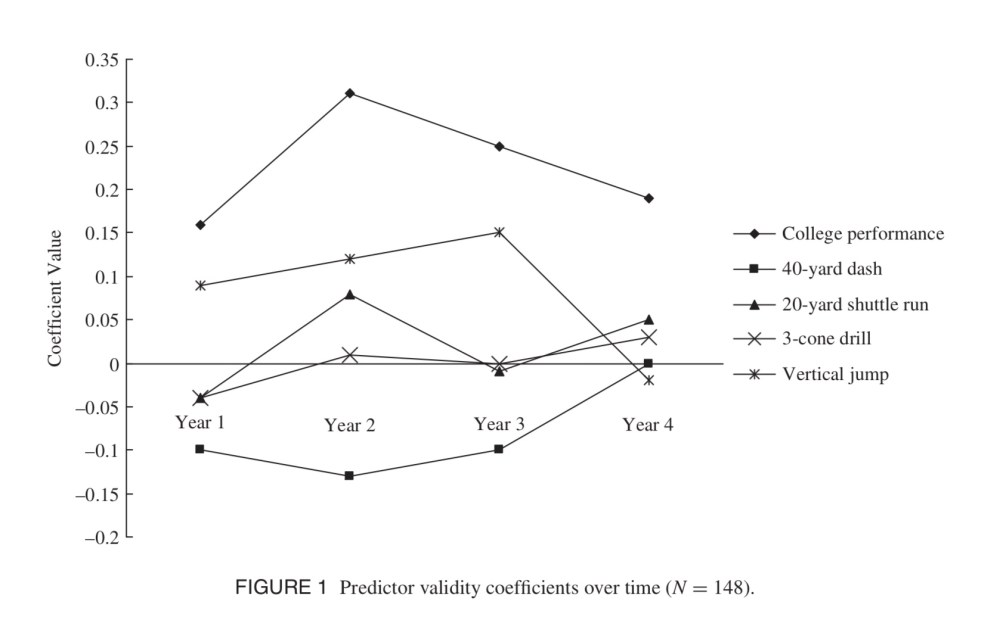

So in one study, researchers considered what best predicted success in the NFL – the physical tests at the NFL Combine, or the player’s college performance. As shown below, the single physical tests had marginal utility for predicting NFL success, and only past performance remained a valid predictor after four years in the league.

Imagine that! The best way to figure out who is gonna be a better football player is not a single test – even a test that approximates some of the physical skills that are directly relevant. The best way to figure out who’s gonna be a good football player is to actually watch people playing football.

Lesson #2 – The better a test approximates the task you’re trying to predict, the better a predictor it is.

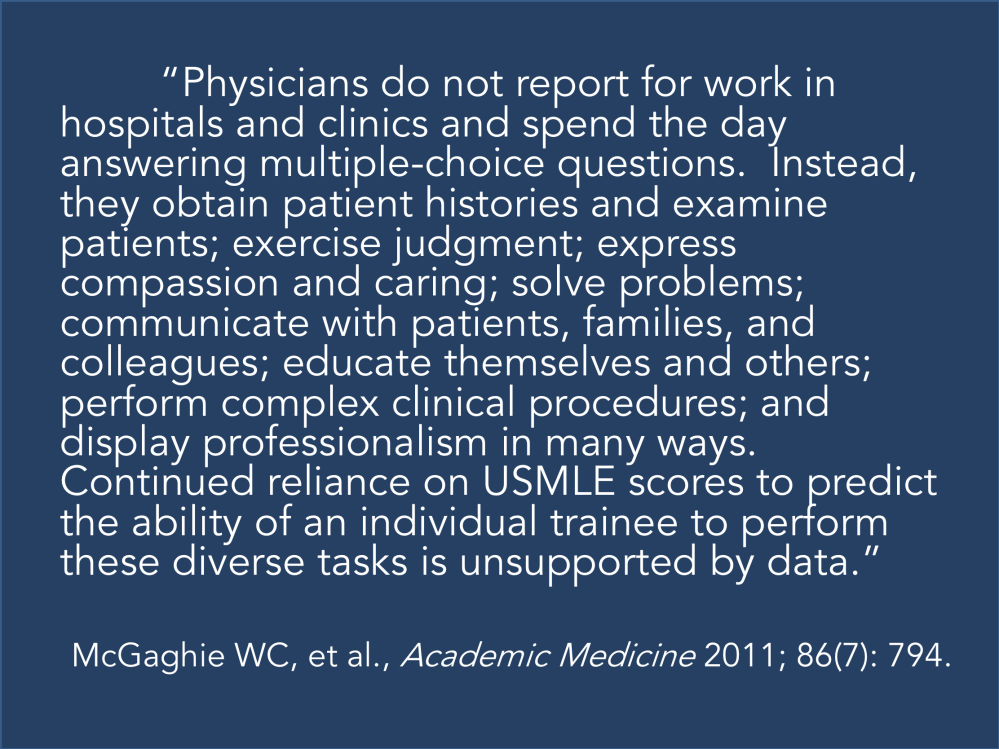

Performance on the USMLE Step 1 measures… something. But that competency is only peripherally relevant to the actual practice of medicine.

As has been eloquently stated by others, real doctoring doesn’t involve multiple choice questions.

_

3) What do numeric metrics really tell us?

While the 40 yard dash is usually the most intensely-watched event at the NFL Combine, there’s another test that gets quite a bit of press: the Wonderlic.

The Wonderlic is a test of cognitive ability. Most of the questions are relatively simple, but some are challenging, and there is significant time pressure. (If you want to see how you’d fare, you can take a 10 question sample Wonderlic here.)

The Wonderlic is most frequently used in the evaluation of quarterbacks – who in the modern NFL must memorize lengthy playbooks and be able to recognize complex defensive schemes at a glance.

It turns out that if you plot Wonderlic scores against QB passer rating, there is a positive correlation between the cognitive test and performance on the field, with every 1 point increase in Wonderlic score corresponding to a 0.2 point increase in passer rating. Indeed, many of the NFL’s best quarterbacks have scored highly on the Wonderlic. Aaron Rodgers scored a 35, and Tom Brady a 33 – scores that are higher than those of the average chemist or electrical engineer.

Still, the scores are not perfectly predictive. Journeyman QB Ryan Fitzpatrick scored a 48 (out of 50) – one of the highest scores of all time, and twice as high as current Offensive Player of the Year Patrick Mahomes.

Is it surprising that an IQ test is not an ideal predictor of QB performance? Of course not. But there’s a bigger lesson here.

When you closely examine the relationship between Wonderlic scores and QB passer rating mentioned above, careful analysis shows that the “linear” modeling is driven by a clustering of points in the lower left quadrant of the plot. In fact, the relationship is more of a threshold effect. No NFL QB with a Wonderlic of 15 or less has ever had a QB rating over 90, and very few QBs with a Wonderlic less than 25 have had successful careers. Yet above 25, the Wonderlic correlates poorly with NFL success.
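If you want to see how a threshold effect can masquerade as a linear trend, here is a minimal Python sketch. To be clear, everything in it is made up for illustration: the scores, the ratings, the cutoff of 25 (borrowed loosely from the Wonderlic example), and the helper names `pearson` and `synthetic_rating`. It is not the analysis referenced above, just a toy version of the same idea.

```python
# Toy illustration only: synthetic data, not real Wonderlic or passer-rating numbers.

THRESHOLD = 25  # hypothetical cutoff, loosely echoing the Wonderlic example


def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def synthetic_rating(score):
    """Made-up 'rating': a step at THRESHOLD plus a wobble unrelated to the trend."""
    wobble = 4 * ((2 * score) % 5 - 2)  # deterministic stand-in for noise
    base = 88 if score >= THRESHOLD else 70
    return base + wobble


scores = list(range(10, 41))
ratings = [synthetic_rating(s) for s in scores]

# Correlation across the full range of scores
r_all = pearson(scores, ratings)

# Correlation among only the candidates at or above the cutoff
above = [(s, r) for s, r in zip(scores, ratings) if s >= THRESHOLD]
r_above = pearson([s for s, _ in above], [r for _, r in above])

print(f"all candidates:  r = {r_all:.2f}")
print(f"above threshold: r = {r_above:.2f}")
```

In this toy data set, the correlation across all candidates comes out strongly positive, even though the score tells you essentially nothing about the candidates who have already cleared the cutoff. The apparent "line" is produced entirely by the gap between the two groups.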

Lesson #3 – Most metrics predict better in one range than another.

And this is probably the most important point.

If I went to the NFL Draft and ran the 40 yard dash, it wouldn’t be pretty. Scouts could spare themselves the trouble of watching my game film – they would know that I don’t have what it takes to play in the NFL on the basis of that metric alone.

However, for the players who run faster than a certain threshold, improving performance tells you very little. You have to be fast enough to play in the NFL, but among players who possess that already-elite speed, scouts have to start looking at other things to make more refined predictions.

This is especially true of cognitive metrics. In a piece that is both insightful and cutting, Nassim Nicholas Taleb reviewed how IQ tests have predictive value only on the lowest end of the scale. (He also points out how those who celebrate and defend these types of metrics are usually those who have a stake in the test.)

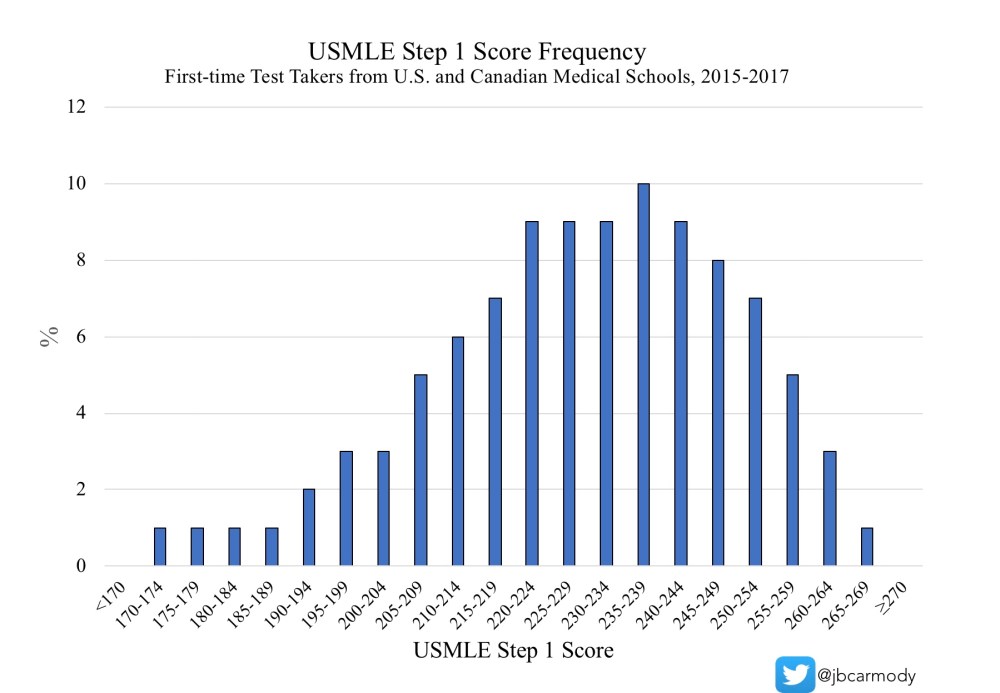

I’ve come to believe that USMLE Step 1 discriminates similarly to the IQ tests that Taleb decries. Yet, unlike IQ tests, which are norm-referenced tests intended to make distinctions among all candidates, the USMLE is a criterion-referenced test that is specifically designed to identify the “minimally competent” candidate.

In terms of fulfilling its intended purpose, I think Step 1 does well. Even in the Information Age, a certain amount of medical knowledge is necessary to function as a physician, and candidates who do not possess that threshold might reasonably be expected to struggle in postgraduate training or clinical practice.

Figure. Distribution of USMLE Step 1 scores.

However, for candidates above that threshold, the Step 1 score probably gives you little useful information. Especially as Step 1 scores climb higher and higher, the facts a candidate must memorize to move from a 220 to a 240 or a 240 to a 260 are of more and more questionable significance. Instead of debating whether a 10 point difference in Step 1 score is meaningful (hint: it isn’t… expect more in a future post), we should start looking at other attributes to find the best candidates.

_

On the NFL, a pass/fail USMLE, and residency selection

I’m not an applicant. I’m not a program director. And I’m definitely not an expert on selection science. I got into the #USMLEPassFail debate because I care deeply about medical education – and Step 1 Mania is rotting it from the inside out.

Yet I realize that, for most of those who are vocal in this debate, it’s not a debate about medical education – it’s a debate about residency selection.

But if residency selection is your priority, it’s hard to argue that we’re doing it the right way.

We have given outsized importance to a single metric to do something that it was never designed to do – and probably does so poorly. And although residency programs can’t devote the same amount of time and resources to talent identification that NFL teams do – you can’t tell me that the current system is the best we can do.

The misalignment in medical education and residency selection

“All medical students care about is Step 1,” say medical school faculty who have made their curricula pass/fail, and who continue to charge the same exorbitant tuition even as students derive the majority of their preclinical education from First Aid, Pathoma, UWorld, and Sketchy.

“Medical school assessments are useless,” say program directors – the same ones who bemoan declining clinical preparedness among entering interns, and who wonder how they can produce well-trained physicians in an era of tightening duty hour restrictions and increasing EMR screentime.

Do you see the problem here?

There is a major misalignment between medical education and educational assessment that benefits no one.

Students – who are forced into an arms race with no natural end – lose. (Even those who match in their chosen discipline and program still lose by starting their career with too few real-world skills to show for their four-year medical education.)

Faculty – who have insights to provide and expertise to share that goes beyond First Aid – lose.

Residency programs – who want to train the best candidates, but have to triage more and more applications with fewer and fewer useful data points – lose.

The only winner is the test’s sponsor, who makes money hand over fist while we act like we’re stuck with this system. “Step 1 isn’t perfect, but it’s the best we’ve got…”

It’s not. It’s time for undergraduate and graduate medical education to work together on real solutions to these issues.

Interestingly, out of the 64 invitees to the recent InCUS meeting, do you know how many were officially representing the Accreditation Council for Graduate Medical Education (ACGME) – the body responsible for resident medical education?

One.

Compare that to 20 from the financially-interested NBME, FSMB, ECFMG, AMA, and AAMC.

If you care about residency selection – and more and more, I do – then the real issue isn’t just how the USMLE is scored. It’s the way that medical schools have abdicated their responsibility to provide (and residency programs, their responsibility to demand) meaningful student assessments.

We can take lessons from NFL talent scouts (and others) to create a system that provides more “game film” and meaningful data to residency program directors. Better yet, we can focus our educational efforts on teaching skills that matter – so that even candidates who ‘lose’ in the residency match process still gain skills that will help their patients and society. (When students spend all their time on Step 1 and end up in the SOAP, their consolation prize is a second choice career and some miscellaneous basic science trivia. Thanks for playing!)

Unfortunately, while Step 1 Mania has us in a chokehold, it’s hard for me to envision us summoning the wherewithal to change residency selection. A pass/fail USMLE gives us an opportunity to rebuild our selection processes. Necessity is the mother of invention, friends.

I believe making Step 1 pass/fail is a necessary – but not sufficient – step toward better assessment tools. Better assessments benefit everyone – and will make us more efficient in matching the right candidates with the right program.

Now, if that sounds like some kind of pie-in-the-sky dream, then I have one more thing to say.

Whether you support a pass/fail test or not, you should ask yourself: is the current system the best we can do? Are we teaching the most important skills to our future physicians? Are we efficiently identifying the traits we want in our future physicians?

I say no. And if you think we can do better, then it’s time to get to work.
